InetSoft Webinar: Machine Learning Analytics Company

Below is the continuation of the transcript of a Webinar hosted by InetSoft on the topic of What Machine Learning Means for Company Analytics. The presenter is Abhishek Gupta, Chief Data Scientist at InetSoft.

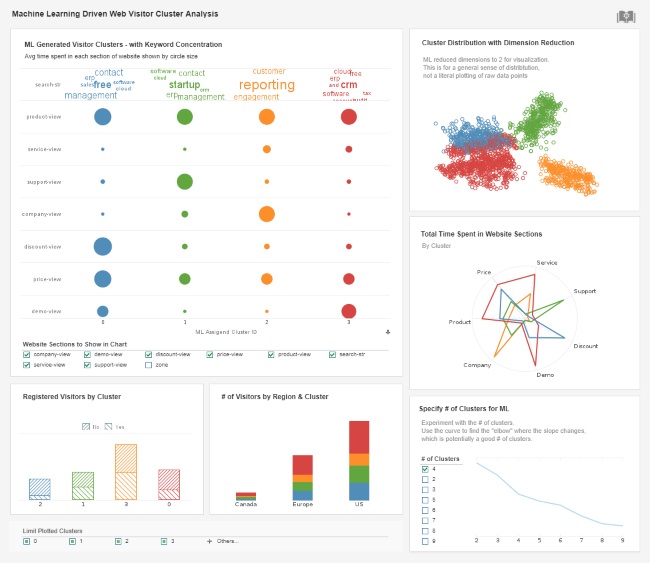

As a machine learning analytics company, we tend to focus on automation, time savings, and something that we are going to talk about in more detail later, graphical interfaces that can bring people who know more about the business closer to the data.

Proprietary solutions also tend to focus on the deployment of models. We were talking about one sort of workflow in which the data scientist develops the model and then hands it off to a programmer to deploy it. Well, we see InetSoft and other proprietary solutions working towards a one-click sort of deploy button, and what a time savings that can be for an organization.

What we look for, and what we've worked on, is how to feed the creativity of data scientists, and we think that allowing for bi-directional integration with open source products is one way. I can be in InetSoft and make calls out to open source. Then we're talking about things like using the open standard PMML.

I think it's really silly, and I do see less and less of this, thank God, when people debate whether R is better than Python or better than InetSoft. These are not productive discussions, I don't think. I mean, I think it's good to know which tool to use for what. I certainly think that's good. I teach a data mining class where I expose my students to this. Know which tool saves you the most time at what point of the process. I think that's more important.

The algorithm economy, meaning how algorithms come into use in a particular organization, is changing, and InetSoft is changing with it. A lot of organizations are changing with it, but I think the companies themselves and the data scientists themselves need to realize what that means and embrace it all.

It doesn't mean you're going to have a spaghetti of everything within the organization. That's just not efficient either. Open source technologies are going to be in there for the long run, and I think time proves which ones are there for the long run. It makes sense for organizations like InetSoft to go ahead and invest in those, as we're doing with the two-sided integration with open source technologies that you described just now.

Let's push forward. I think the typical drawback of open source software is that it tends, and this is not always true, to provide fewer graphical interfaces, fewer deployment utilities, and less support. I think this stuff is obvious to people at this point. Another drawback comes with less sophisticated deployment utilities: if it's taking three months to deploy a model, there are certainly instances where that model's going to be out of date by the time it gets into production. And I think that three months is fairly common for open-source-only solutions.

The other concept that we definitely wanted to talk about is making analytics accessible, approachable, digestible for a wider range of users, and why is that? People are calling them citizen data scientists. I know there are a lot of people who don't like that term. A lot of people do like that term. The fact is, that term is out there, and it means something pretty specific.

That's why I chose to put that on here. I think enabling people to get their hands onto real analytics in a very digestible way has a lot of positive benefits for everything we're trying to do here. First of all, those people become an army of informed business analysts who are looking for pockets of potential in the data. I think any data scientist knows what the challenges of looking at all the data are, in terms of trying to figure out what's in there.

Those citizen data scientists can go out there and do some of that work and say, hey, look, this data is giving some lift here. I'm not just talking about sophisticated data science. I'm talking about being able to build models.
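As a rough illustration of what "giving some lift" can mean, here is a minimal sketch in plain Python. It assumes the common definition of lift as the response rate inside a segment divided by the overall response rate; the function name `lift` and the data are made up for the example and are not taken from InetSoft's product.

```python
def lift(outcomes, in_segment):
    """Response rate inside a segment divided by the overall response rate.

    outcomes   -- list of 0/1 flags, 1 meaning the customer responded
    in_segment -- list of booleans, True meaning the row is in the segment
    """
    if len(outcomes) != len(in_segment):
        raise ValueError("outcomes and in_segment must have the same length")

    segment_outcomes = [o for o, s in zip(outcomes, in_segment) if s]
    if not outcomes or not segment_outcomes:
        raise ValueError("need at least one row overall and in the segment")

    overall_rate = sum(outcomes) / len(outcomes)
    if overall_rate == 0:
        raise ValueError("lift is undefined when nobody responded")

    segment_rate = sum(segment_outcomes) / len(segment_outcomes)
    return segment_rate / overall_rate


# Hypothetical data: ten customers, four of whom responded.
responded = [1, 0, 0, 1, 1, 0, 0, 0, 1, 0]
# Hypothetical segment picked out by an analyst (three customers).
flagged = [True, False, False, True, True,
           False, False, False, False, False]

segment_lift = lift(responded, flagged)
print(f"lift = {segment_lift:.2f}")
```

A lift above 1 means the segment responds more often than the population as a whole, which is the kind of pocket of potential an analyst could flag for a data scientist to examine further.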

There's another key advantage to that. The more we have business people actually playing with models, building models, not necessarily the ones that are going to be deployed in the field, the more they understand it, the more they're going to realize what models can do, what the limitations of models are, and really understand how to fit them into business processes better.

It's almost like an education perspective that accessible analytics can bring. These people aren't the ones who want to code, so it has to be very intuitive, very quick. They don't want to wait around for the results. That's why we leverage in-memory architectures to do this, and it has to give intuitive results, very visual results.

This is hopefully going to address some of the significant math or stats trauma that I think is still prevalent amongst a lot of business people. The point is that, oh, these factors are going to predict that particular business phenomenon, and the math behind it isn't necessarily important for them to comprehend.

They just need to see what the model is doing at that higher level, and then they can pass their insights off to a data scientist who's going to build the robust production model. Those are the three things that I think accessible analytics really provides: the ability to collaborate across those different profiles, comprehension on the side of the people who maybe aren't as mathematically or statistically inclined, and really creating a pot for innovation.