Cube of Data in Server Memory

This is the continuation of the transcript of the DM Radio show "Avoiding Bottlenecks and Hurdles in Data Delivery". InetSoft's Principal Technologist, Kristina Bagrova, joined industry analysts and other data management software vendors for a discussion about current issues and solutions for information management.

Eric Kavanagh: Yeah, that’s interesting. Philip, have you come across that terminology, or that kind of thing, recently?

Philip Russom: Well, as you mentioned materialized views, that technology has been with us for decades, right? It's just that in its early incarnation, say in the mid-90s, it was kind of a limited technology, and performance especially was an issue. Refreshing the view, instantiating data into the view, was kind of slow.

Thank God these kinds of speed bottlenecks have been cleared as the database vendors themselves have worked out virtual tables, or you have standalone vendors who are working on this, obviously. Denodo and Composite have both made contributions there. So yeah, this new technology is something that a lot of us wanted in the ’90s. It just didn’t work very well, and luckily today it works pretty well.
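As a rough illustration of the materialized-view idea Russom describes, the sketch below precomputes a summary table and refreshes it on demand. It uses Python's built-in `sqlite3` module, which has no native materialized views, so the "view" is emulated with an ordinary table. The table names `sales` and `sales_by_region` and the function `refresh_sales_by_region` are hypothetical and are not taken from any product mentioned in the show.

```python
import sqlite3


def refresh_sales_by_region(conn: sqlite3.Connection) -> None:
    """Rebuild the precomputed summary (an emulated materialized view)."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales_by_region "
        "(region TEXT PRIMARY KEY, total REAL)"
    )
    # DELETE and INSERT run in one transaction, committed when the block exits.
    with conn:
        conn.execute("DELETE FROM sales_by_region")
        conn.execute(
            "INSERT INTO sales_by_region "
            "SELECT region, SUM(amount) FROM sales GROUP BY region"
        )


def read_summary(conn: sqlite3.Connection) -> dict:
    """Read the summary table without touching the base table."""
    return dict(conn.execute("SELECT region, total FROM sales_by_region"))


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?)",
    [("east", 100.0), ("west", 50.0), ("east", 25.0)],
)

refresh_sales_by_region(conn)
snapshot = read_summary(conn)

# New base data does not show up in the summary until the next refresh.
conn.execute("INSERT INTO sales VALUES ('west', 10.0)")
stale = read_summary(conn)

refresh_sales_by_region(conn)
fresh = read_summary(conn)
```

The cost he mentions sits in the refresh step: this sketch rebuilds the whole summary each time, which is simple but gets slower as the base table grows.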

Ian Pestel: Ease of use, or even extending beyond these individual databases, where you could even take a mashup across data sources, pulling feeds, pulling various databases together, and then do that as well.

Jim Ericson: Yeah, let me provide a connection to that. We haven’t had a chance today to talk about in-memory databases, and I am finding in-memory databases to be a way to get around certain performance bottlenecks, so I want to introduce that to our talk today. I am also seeing in-memory databases used for things like operational business intelligence or performance management.

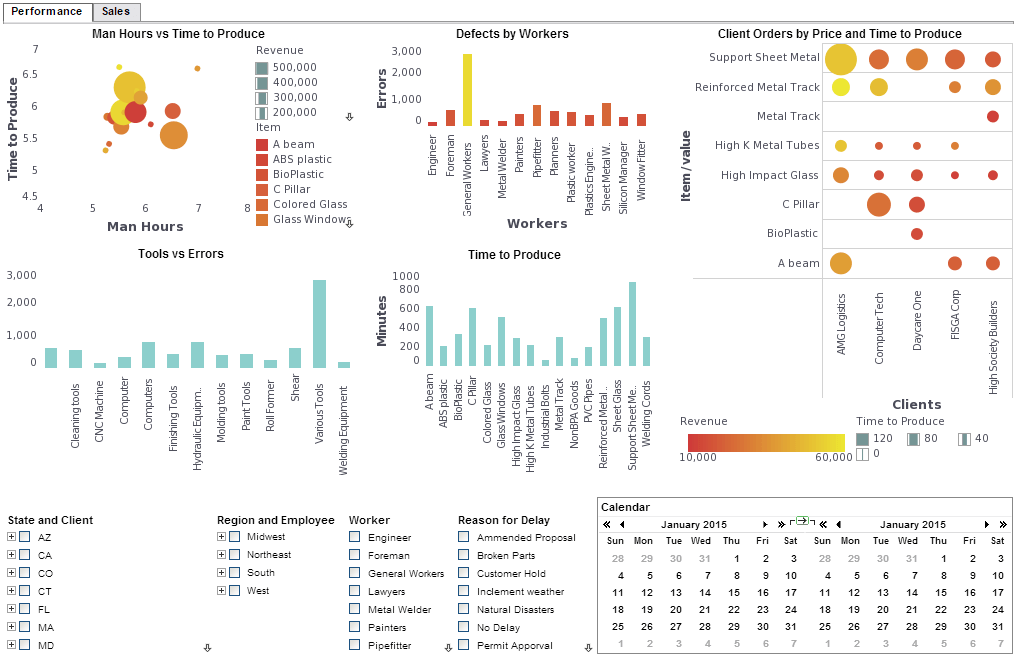

It might involve OLAP, or imagine a cube of data in server memory. It's refreshed very frequently, maybe some of it in real time. That way business managers can refresh their management dashboards based on that data, and manage in a very granular, very short-timeframe kind of fashion. And this is made possible by some of the advancements in hardware.
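A minimal sketch of what "a cube of data in server memory" could look like, assuming a toy fact schema of (region, product, amount). The class `InMemoryCube` and its methods are hypothetical names chosen for illustration; a real OLAP engine would add hierarchies, more measures, and concurrency control.

```python
from collections import defaultdict
from typing import Dict, Iterable, Optional, Tuple

Fact = Tuple[str, str, float]  # (region, product, amount): assumed schema


class InMemoryCube:
    """Toy two-dimensional cube holding pre-aggregated sums in memory."""

    def __init__(self) -> None:
        self._cells: Dict[Tuple[str, str], float] = defaultdict(float)

    def load(self, facts: Iterable[Fact]) -> None:
        """Frequent refresh: fold newly arrived facts into existing cells."""
        for region, product, amount in facts:
            self._cells[(region, product)] += amount

    def total(
        self, region: Optional[str] = None, product: Optional[str] = None
    ) -> float:
        """Slice or roll up; None means 'all values' on that dimension."""
        return sum(
            value
            for (r, p), value in self._cells.items()
            if (region is None or r == region)
            and (product is None or p == product)
        )


cube = InMemoryCube()
cube.load(
    [
        ("east", "widget", 100.0),
        ("east", "gadget", 40.0),
        ("west", "widget", 60.0),
    ]
)
east_total = cube.total(region="east")

# A later micro-batch arrives; a dashboard query sees it immediately.
cube.load([("east", "widget", 10.0)])
widget_total = cube.total(product="widget")
grand_total = cube.total()
```

Because the cells already hold aggregates, a dashboard query only scans the cells, not the raw transactions, which is where the responsiveness comes from.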

A lot of this is Moore’s Law in action. A lot of the technology advancements I was talking about earlier are actually at the hardware level. As we have gone from 32-bit to 64-bit systems, companies are finally replacing the old 32-bit equipment. They have got the giant memory space of 64-bit, so they are able to do a lot in memory. And when I say federation, I mean this in-memory database is basically federated, and it's virtualized, right? I am seeing that in-memory is a way to get around bottlenecks.
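To put rough numbers on the 32-bit versus 64-bit memory space, the arithmetic below computes the theoretical byte-addressable limit for each pointer width. These are upper bounds only: real processors and operating systems expose fewer address bits than the full 64, so the usable figure on any given machine is smaller. The helper name `addressable_bytes` is illustrative.

```python
GIB = 2**30  # bytes in one gibibyte
EIB = 2**60  # bytes in one exbibyte


def addressable_bytes(pointer_bits: int) -> int:
    """Theoretical number of distinct byte addresses for a pointer width."""
    return 2**pointer_bits


limit_32 = addressable_bytes(32)
limit_64 = addressable_bytes(64)

print(f"32-bit: {limit_32 // GIB} GiB")  # 32-bit: 4 GiB
print(f"64-bit: {limit_64 // EIB} EiB")  # 64-bit: 16 EiB
```

A 4 GiB ceiling is too small to hold most warehouse-scale data sets, which is why the move to 64-bit matters for in-memory analytics.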

Philip Russom: Yeah, your task system is part of that virtualized layer, like when I am doing that intermediate processing and wonder what I store. And the problem is how do I manage it, right? So the goal here is to provide you a layer of technology, semantically at that place in the stack, so I can store it. But how do I manage it? How do I create it easily? How do I flexibly change the schema? How do I get information incrementally in and out of it?

So it becomes an intermediate layer where you are going to be able to store data, and that’s very fast, but you also have to be able to manage it. That’s the critical element people sort of forget about when they are building those very fast in-memory databases. You still have to manage it somehow in terms of what’s going in there and how you are going to keep it refreshed.
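One simple way to approach the "how do you keep it refreshed" question is an age-based policy: serve an entry from memory until it is older than some limit, then reload it from the source. The sketch below is an assumption-laden illustration, not a description of any vendor's product; `RefreshingCache`, `load_from_source`, and the injected `clock` are hypothetical names, and the fake clock exists only so the example runs without waiting.

```python
import time
from typing import Any, Callable, Dict, Tuple


class RefreshingCache:
    """In-memory layer that reloads an entry once it exceeds a maximum age."""

    def __init__(
        self,
        loader: Callable[[str], Any],
        max_age_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._loader = loader
        self._max_age = max_age_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[Any, float]] = {}

    def get(self, key: str) -> Any:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is None or now - entry[1] >= self._max_age:
            # Missing or stale: go back to the slower source and record when.
            value = self._loader(key)
            self._entries[key] = (value, now)
            return value
        return entry[0]


source_calls = []


def load_from_source(key: str) -> str:
    """Stand-in for a slow query against the underlying database."""
    source_calls.append(key)
    return f"{key}-v{len(source_calls)}"


fake_now = [0.0]  # mutable holder so the lambda below sees updates
cache = RefreshingCache(load_from_source, 5.0, clock=lambda: fake_now[0])

first = cache.get("kpi")   # loaded from the source
second = cache.get("kpi")  # served from memory, no source call
fake_now[0] = 6.0          # pretend six seconds have passed
third = cache.get("kpi")   # stale, so reloaded from the source
```

The maximum age is the management knob here: a shorter limit gives fresher dashboards at the cost of more load on the source system.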

Jim Ericson: Yeah, speaking of data management, I do want to point to another piece of technology that has greatly eased the bottleneck problem as well as the big data problem. That would be storage. Storage just keeps getting bigger in capacity. It gets smarter. We can do more processing down at the storage level without having to drag terabytes of data over the Ethernet cable. It also gets cheaper in price as it gets better.

It's amazing. So one of the ways that we are finding bottlenecks alleviated is that storage itself is fast to read from and write to. I/O is not as bad as it used to be. Processing is closer to the data, and so forth. So I don’t know, are you guys seeing that storage has been a helpful thing to speed this up?

What Does "Speed of Thought" Mean for Analytics?

The phrase "speed of thought" in analytics refers to the ability of a data system to respond to user queries and interactions as quickly as the user can think of them. It represents a benchmark for usability, agility, and responsiveness in analytical tools—enabling users to explore data, generate insights, and iterate without delay. When systems operate at the speed of thought, they empower users to follow curiosity-driven paths, validate hypotheses instantly, and uncover patterns in real time.

This concept became widely recognized through innovations in business intelligence and in-memory computing, where traditional slow query processing gave way to instantaneous filtering, drilling, and visualizing. Modern tools that achieve speed of thought often rely on optimized data engines, high-performance caching, and intuitive, low-latency interfaces. The benefit is a seamless analytical experience where data exploration flows as naturally and rapidly as thinking itself.

In practical terms, analytics at the speed of thought allows business users to:

- Quickly pivot between different views of data without waiting on IT

- Test multiple what-if scenarios during meetings or live presentations

- Answer follow-up questions immediately as they arise

- Reduce cognitive friction and stay in a state of insight generation

Ultimately, achieving speed of thought in analytics is not just about technology performance—it's about empowering decision-makers to move faster, with more confidence, and with fewer barriers between their questions and the answers hidden in their data.