All the Different Ways to Analyze Data

Key Takeaways From Data Analysis

This page will cover the definition of data analysis and drill down into the applications of data-centric analysis. By understanding your data and making it work for you, it's possible to transform raw data into positive actions that will take your organization to the next level. Here is a brief summary of all the different ways to analyze data and ultimately grow your business.

7 Different Ways to Analyze Data

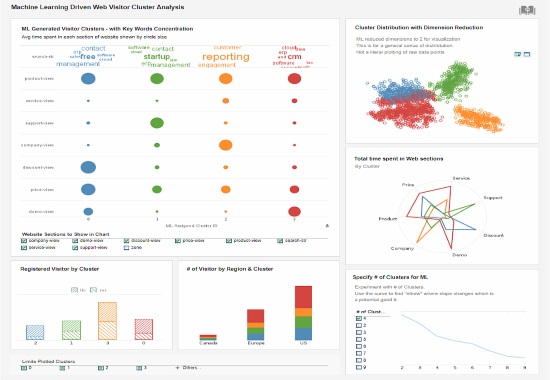

- Cluster analysis

- Cohort analysis

- Regression analysis

- Factor analysis

- Neural Networks

- Data Mining

- Text analysis

Top Data Analytics Techniques

- Collaborate to assess needs

- Establish questions

- Data democratization

- Data cleaning

- Determine KPIs

- Omit unnecessary data

- Construct a data management roadmap

- Integrate technology

- Answer questions

- Visualize the data

- Interpret the data

- Consider autonomous technology

- Build a narrative

- Share the load

- Use robust analytics tools

We are living in a time of ubiquitous data. These days, you can't run a business without some knowledge and understanding of data analysis.

From global multinationals to small businesses, every kind of organization has data that at a minimum needs to be monitored, and in many cases analyzed thoroughly, to ensure that affairs are managed properly.

Having the knowledge of how to properly analyze and glean insights from your organization's data is more important than ever, and can make or break you relative to competitors.

But while everyone has data, most are barely scraping by when it comes to making use of it. If you feel that you're not doing all that you can with your data, you're not alone. Most managers and business users know that greater direction could be gleaned from company data, but don't know how to do so.

Data Analysis and Data Reporting Defined

Data analysis is the process of evaluating data and drawing conclusions. Data analysis is standard for any business, but it's important that it's done correctly so that internal issues can be identified and quickly resolved.

This is where data reporting is useful. Data reporting is the process of collecting data and manipulating it to make it clearer for the audience that is analyzing it. Data reporting is an essential aspect of data analysis.

To understand the distinction better, see this page on data analysis reporting.

Two Kinds of Data Analysis Tools

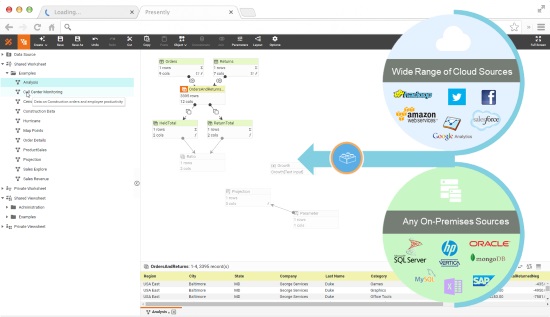

While there is a large selection of data analysis technologies available, these tools can be separated into two fundamental categories: ones based on hardware architecture, and ones based on software architecture. Hardware solutions are disk-based, whereas software solutions are memory-based. Neither type is inherently better or worse; the right choice depends on your organization's requirements. There are also hybrid solutions that combine both techniques.

A disk-based solution is usually much more powerful, and is used with a large data profile involving terabytes of raw data and the need for aggregation to take advantage of cubes and summarization. In most cases, the disk-based solution requires some kind of data modeling so that you can summarize and create aggregations. The downside of disk-based solutions is a lack of flexibility in analysis and aggregation. Disk-based solutions are typically powered by an OLAP engine.
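To make the idea of summarization concrete, here is a minimal sketch of pre-aggregating raw rows into rollups, loosely in the spirit of a cube. The sales rows, dimension names, and the `build_rollup` helper are all hypothetical and only illustrate the concept; a real OLAP engine works very differently under the hood.

```python
from collections import defaultdict

# Hypothetical raw fact rows: (region, product, quarter, revenue)
SALES = [
    ("North", "Widget", "Q1", 1200.0),
    ("North", "Widget", "Q2", 1500.0),
    ("North", "Gadget", "Q1", 800.0),
    ("South", "Widget", "Q1", 950.0),
    ("South", "Gadget", "Q2", 1100.0),
]

# Names of the dimension columns, in the same order as in each row
DIMENSIONS = ("region", "product", "quarter")


def build_rollup(rows, group_by):
    """Pre-aggregate revenue for one chosen combination of dimensions."""
    positions = [DIMENSIONS.index(name) for name in group_by]
    totals = defaultdict(float)
    for row in rows:
        key = tuple(row[i] for i in positions)
        totals[key] += row[-1]  # revenue is the last column
    return dict(totals)


# Summaries are computed once, up front, and can then be queried many times.
by_region = build_rollup(SALES, ["region"])
by_region_product = build_rollup(SALES, ["region", "product"])
```

The sketch also hints at the flexibility trade-off: a question about a combination of dimensions that was never summarized, such as product by quarter, means building another rollup first.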

In an in-memory environment, data access is much faster, because the information is cached and available through a fast I/O exchange, which offers a speed-of-thought kind of interactivity with the data. However, in-memory-only solutions are often limited to department-sized datasets of up to a terabyte. For this reason, they are insufficient for a large-scale enterprise analytical environment.

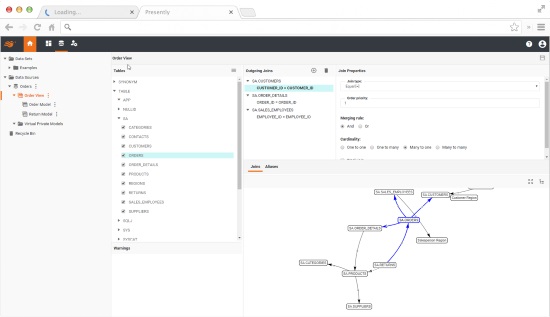

In-memory data modeling can be done on the fly via a graphical user interface, whereas a disk-based solution tends to require more data summarization to make the environment work properly.

Another feature of some in-memory solutions is associative indexing done at the user level, allowing you to assign a relationship between tables with a drag and drop tool, pulling data from relational or non-relational data sources, including flat files, spreadsheets, or almost any type of data source.

This is another common advantage of an in-memory BI solution. Read more about types of data analysis technologies here.
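As a rough illustration of what relating two tables from different sources means, the sketch below links a flat-file export to a list of records through a shared key. The data, the column names, and the `associate` helper are hypothetical; an actual BI tool would do this through its own interface and indexing rather than hand-written code.

```python
import csv
import io

# Hypothetical flat-file export of orders
ORDERS_CSV = """order_id,customer_id,amount
1,c1,40.0
2,c2,15.5
3,c1,22.0
"""

# Hypothetical customer records from another source, such as a spreadsheet
CUSTOMERS = [
    {"customer_id": "c1", "name": "Acme Ltd"},
    {"customer_id": "c2", "name": "Birch & Co"},
]


def associate(left_rows, right_rows, key):
    """Attach the matching right-hand row to each left-hand row via a shared key."""
    index = {row[key]: row for row in right_rows}  # look up right rows by key
    joined = []
    for row in left_rows:
        match = index.get(row[key], {})  # empty dict if there is no match
        joined.append({**match, **row})
    return joined


orders = list(csv.DictReader(io.StringIO(ORDERS_CSV)))
joined = associate(orders, CUSTOMERS, "customer_id")
```

Each row in `joined` now carries both the order fields and the customer name, which is the kind of relationship a drag-and-drop tool would set up for you.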

The Impact of Data Analysis

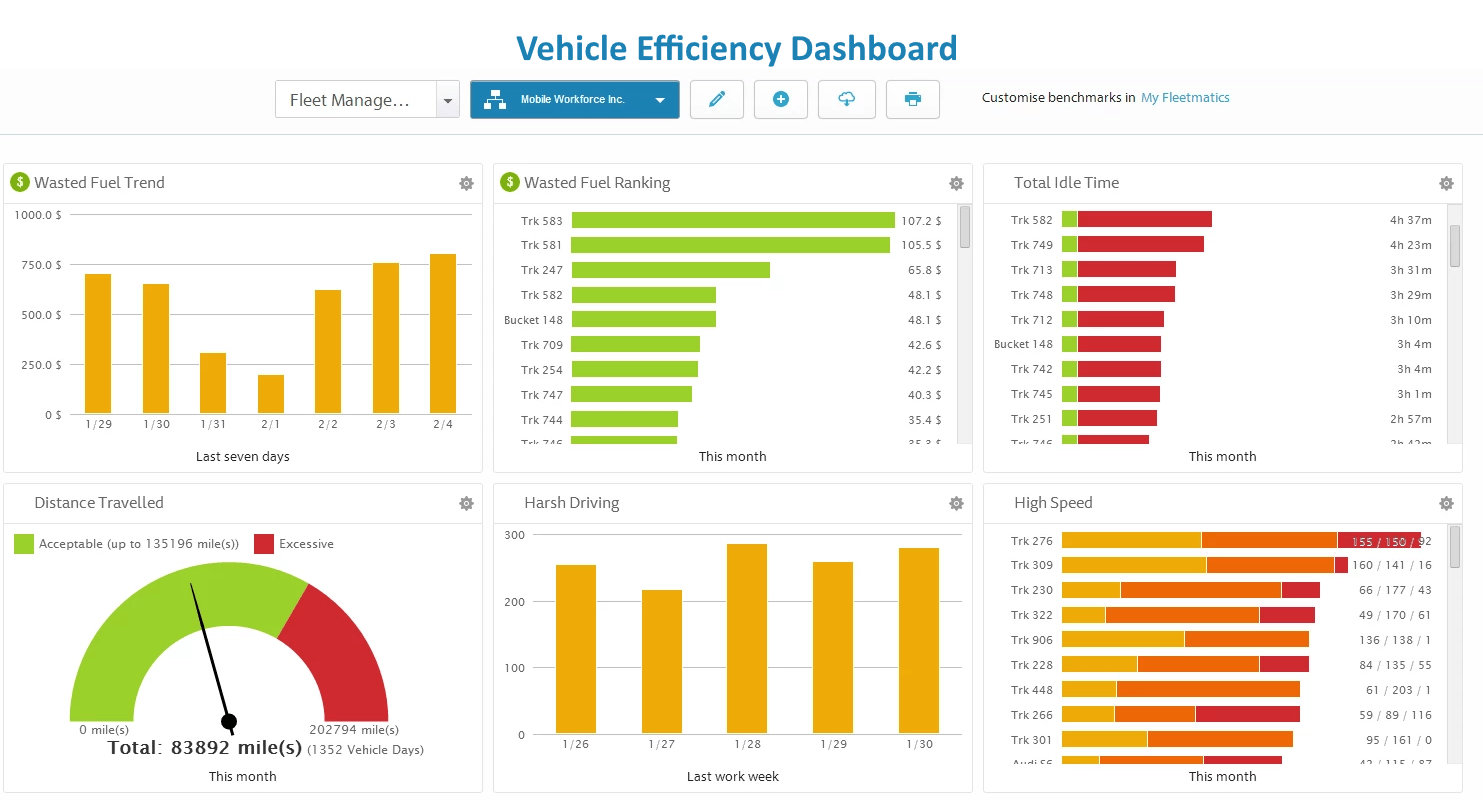

Analyzing data can make a huge difference for your organization. It used to be the case that only businesses with large inventories or many employees would have enough data to require data analysis tools. But these days, with so many more business functions handled digitally, such as accounting, payroll, web analytics, and budgeting, even small business owners have large volumes of data that can be leveraged for better management decisions.

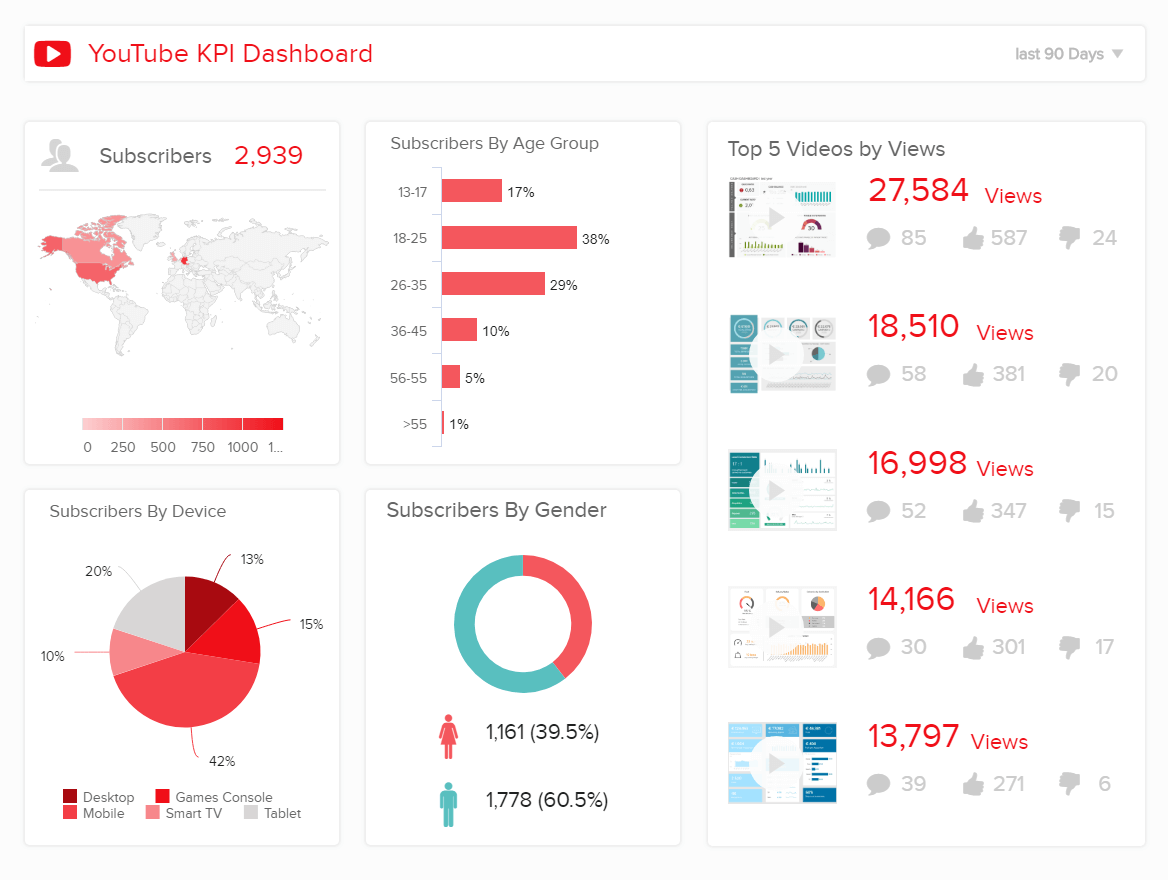

As nearly every business has a website, web analytics data can help you understand your potential customers better by showing you which types of pages are more compelling, and what kinds of materials will bring them to the site and keep them there.

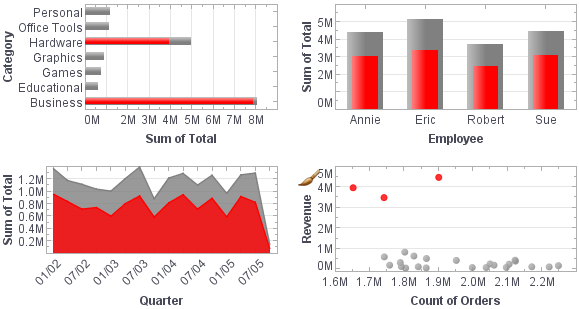

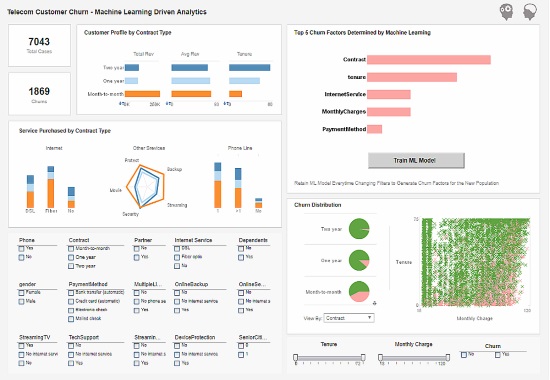

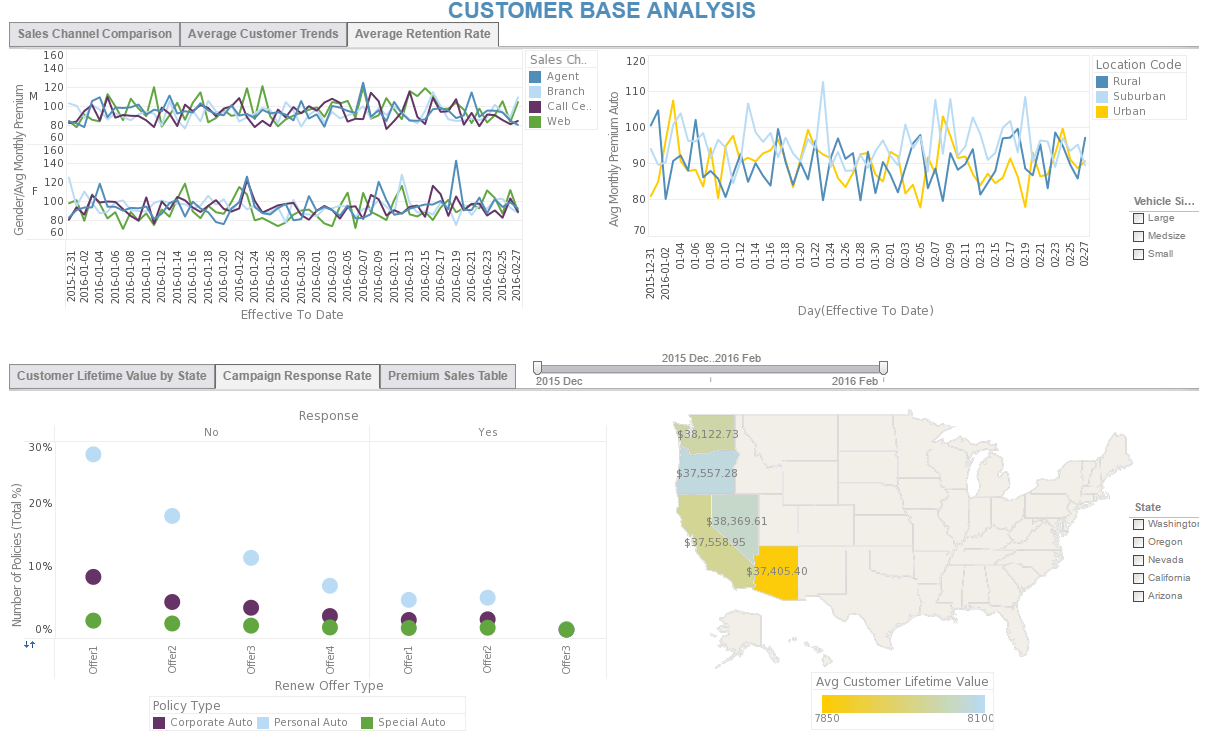

Customers are the most important element in any business. By using data analytics to get a new perspective on your customers, you can better understand their demographics, habits, purchasing behaviors, and interests.

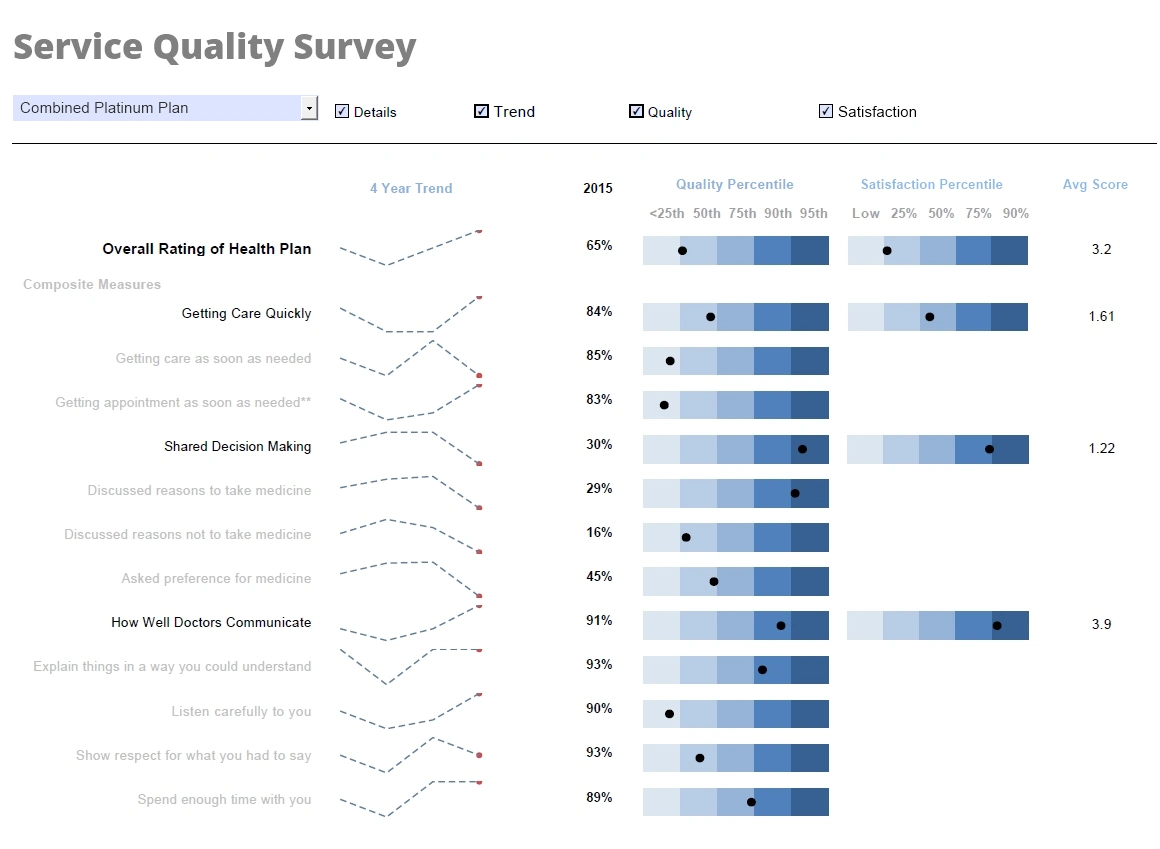

In the long run, adopting an effective analytics strategy will increase the success of marketing, helping you target new potential customers, and also avoid targeting the wrong audience with the wrong message. Customer satisfaction can also be tracked by analyzing reviews and customer service performance.

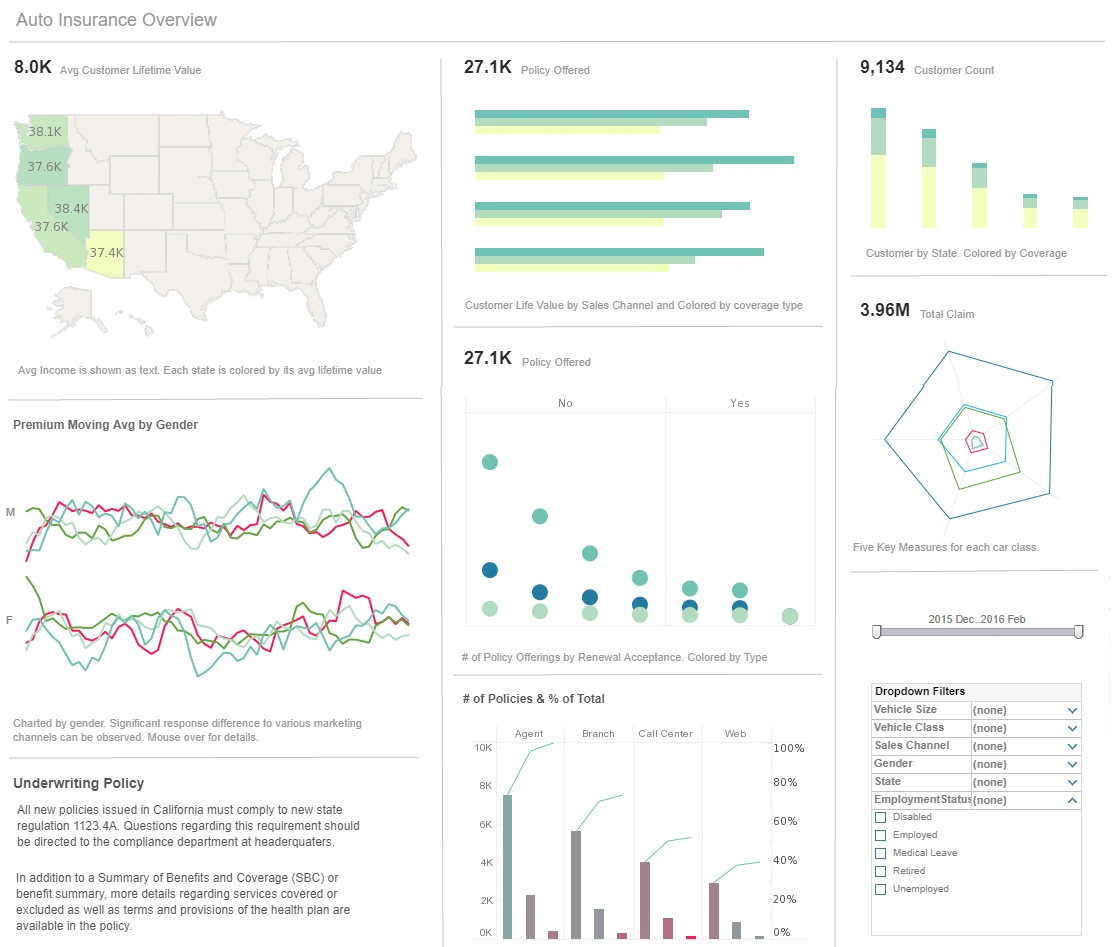

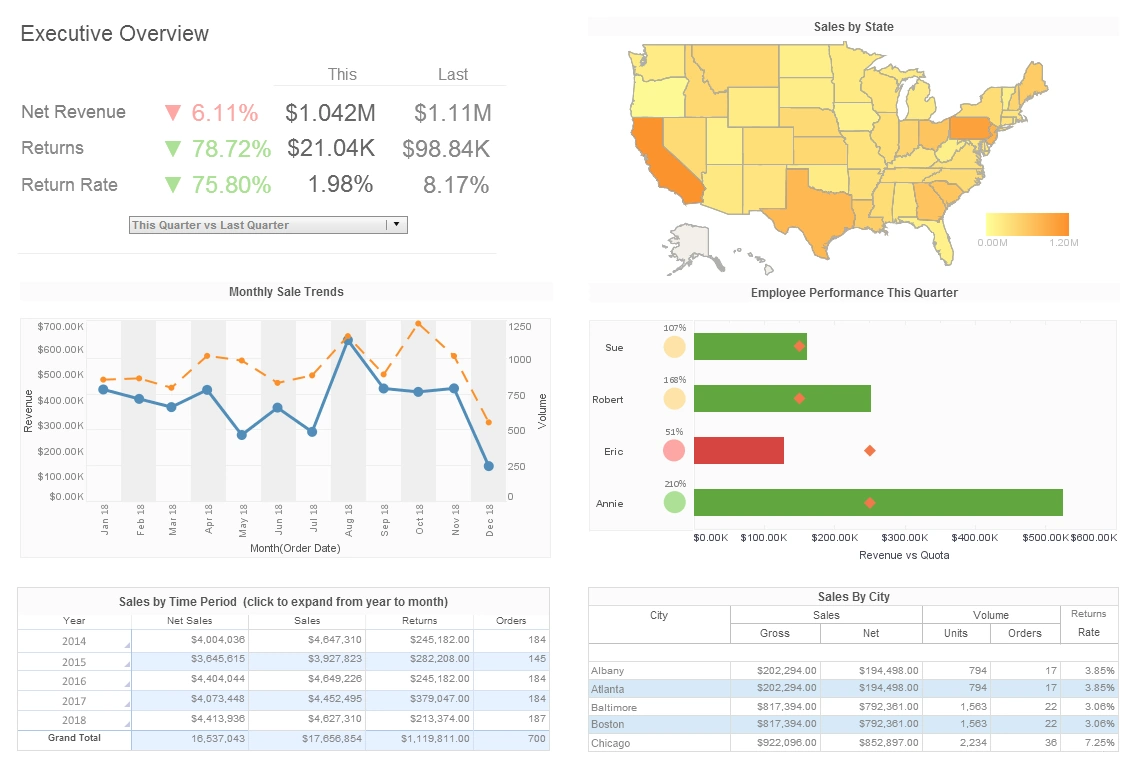

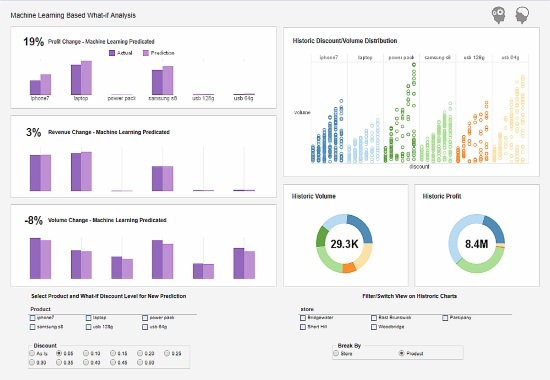

Managers can also benefit from data analysis, as it encourages business decisions to be made on facts rather than intuition alone. With analytics, you can understand where to invest your capital, detect growth opportunities, predict your income, and tackle unusual situations before they become problems. Data from your payroll can help you understand what has the biggest cost impact on your business and help you evaluate which departments produce the biggest return on your investment.

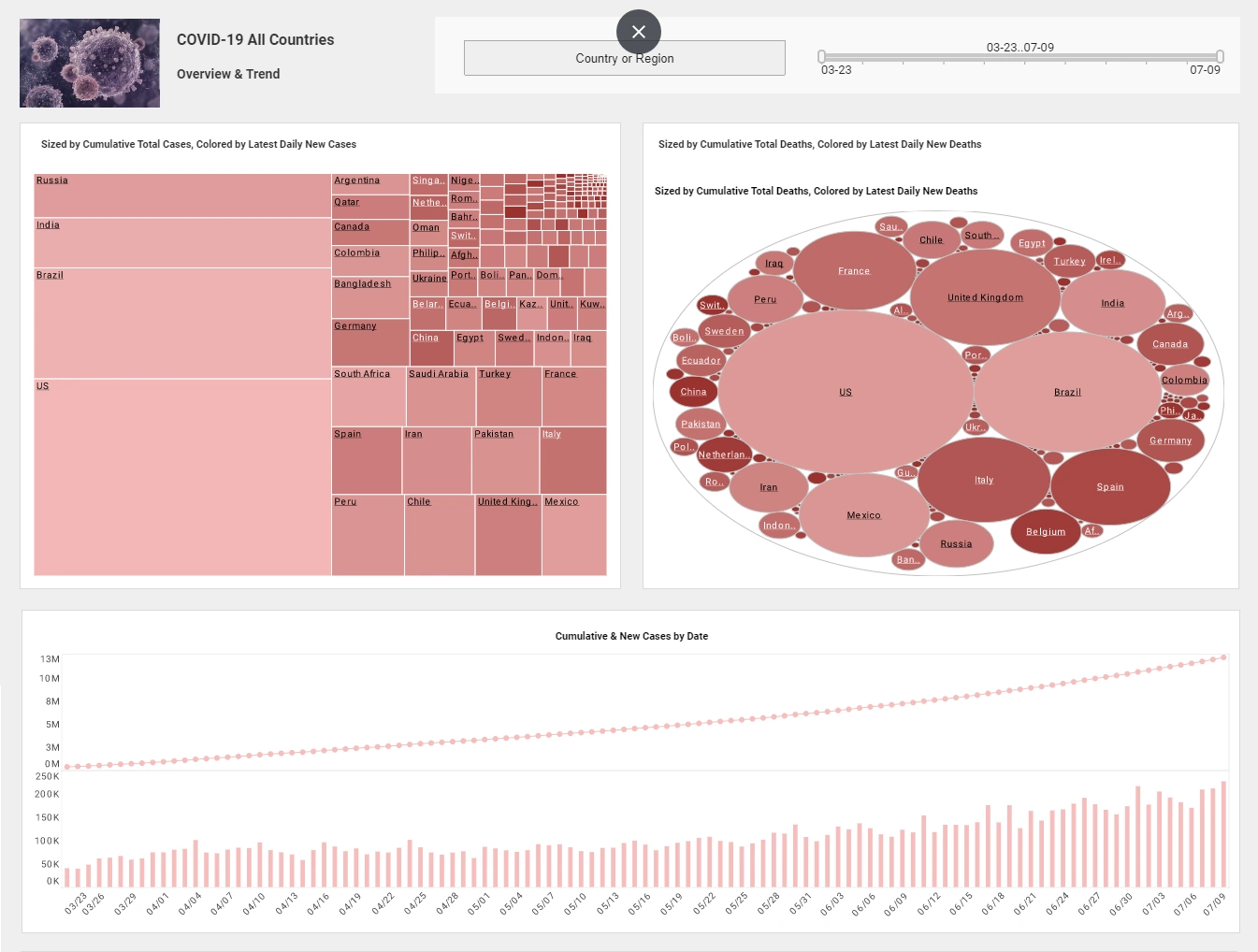

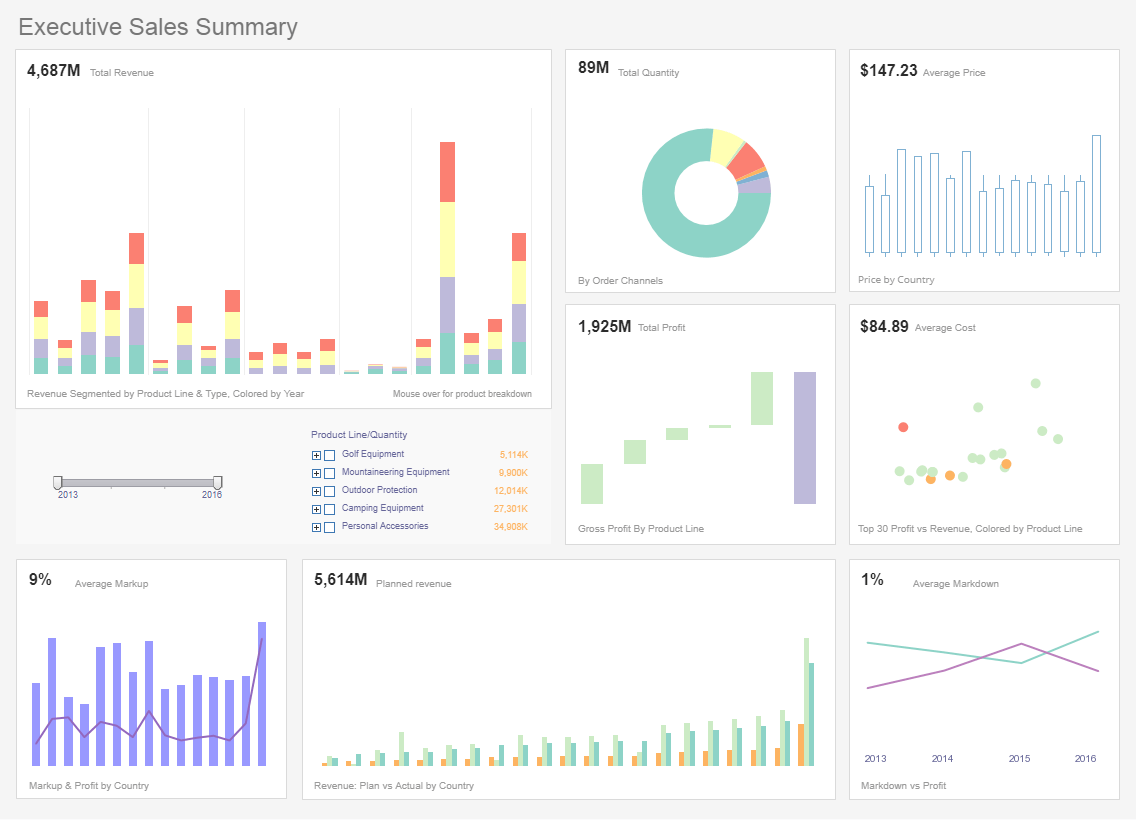

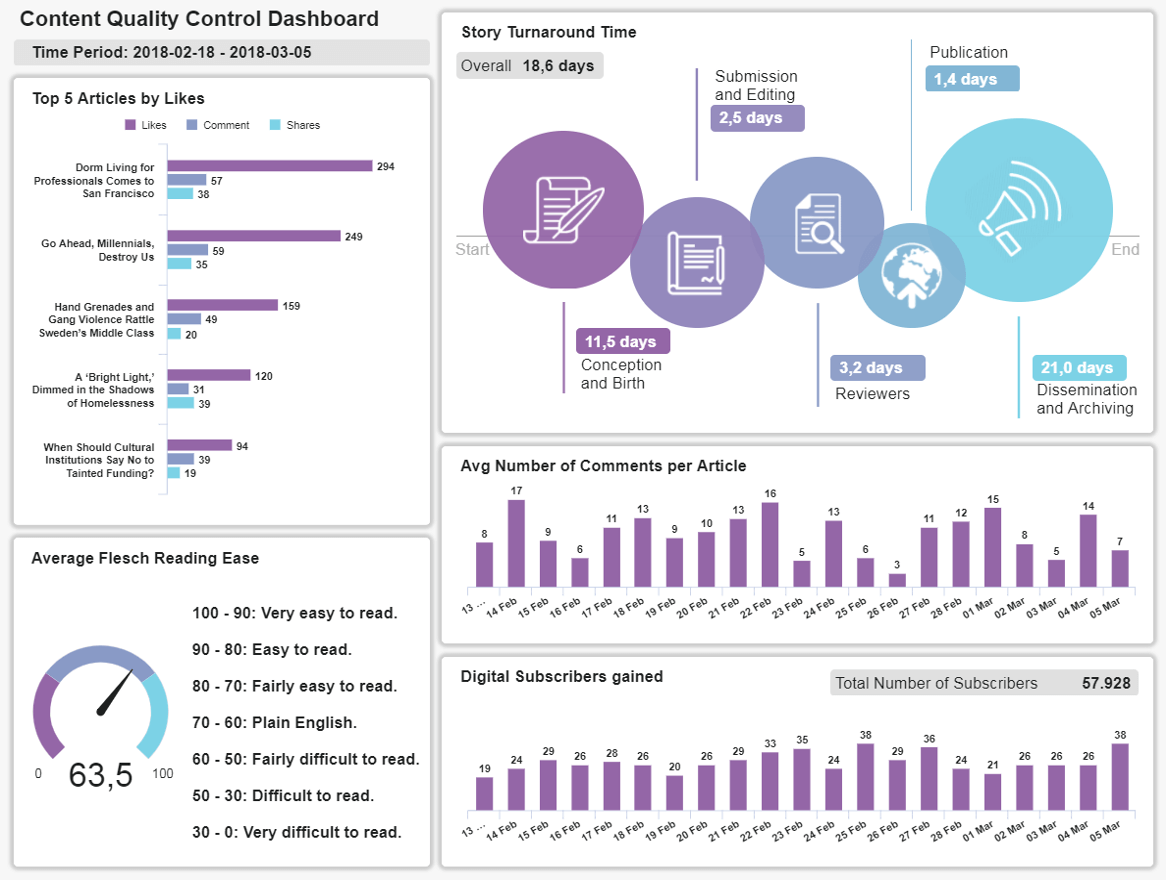

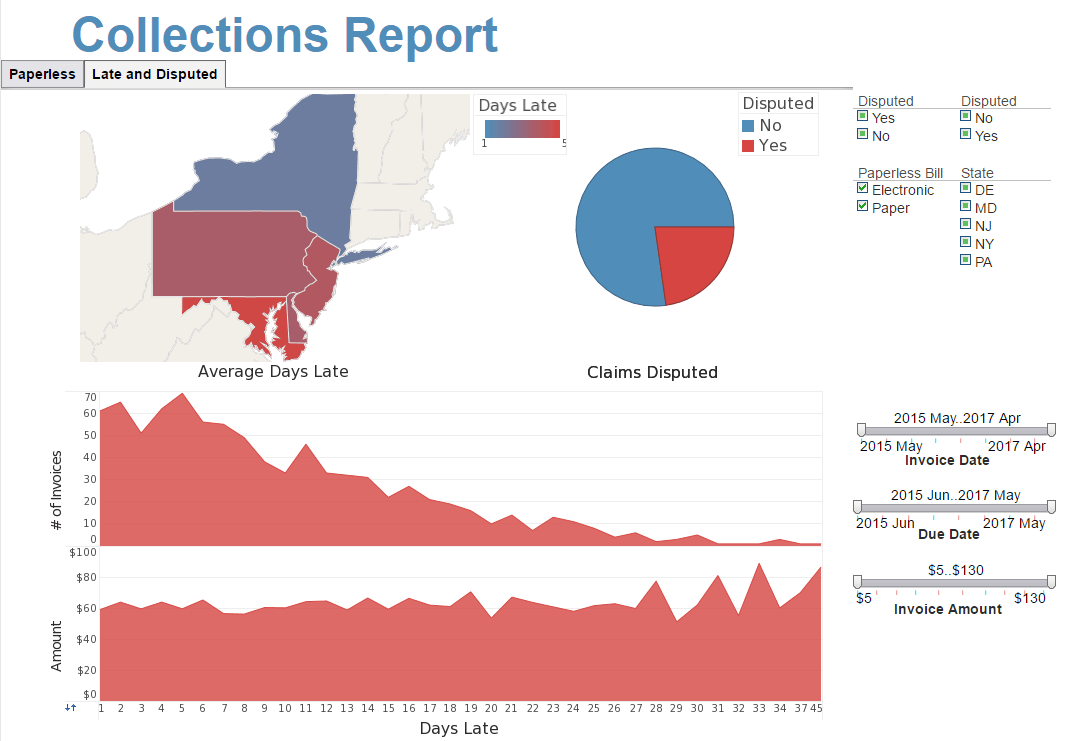

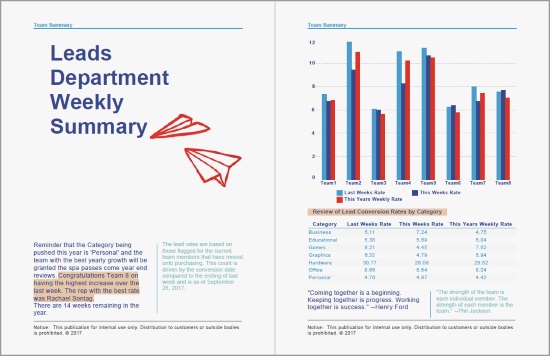

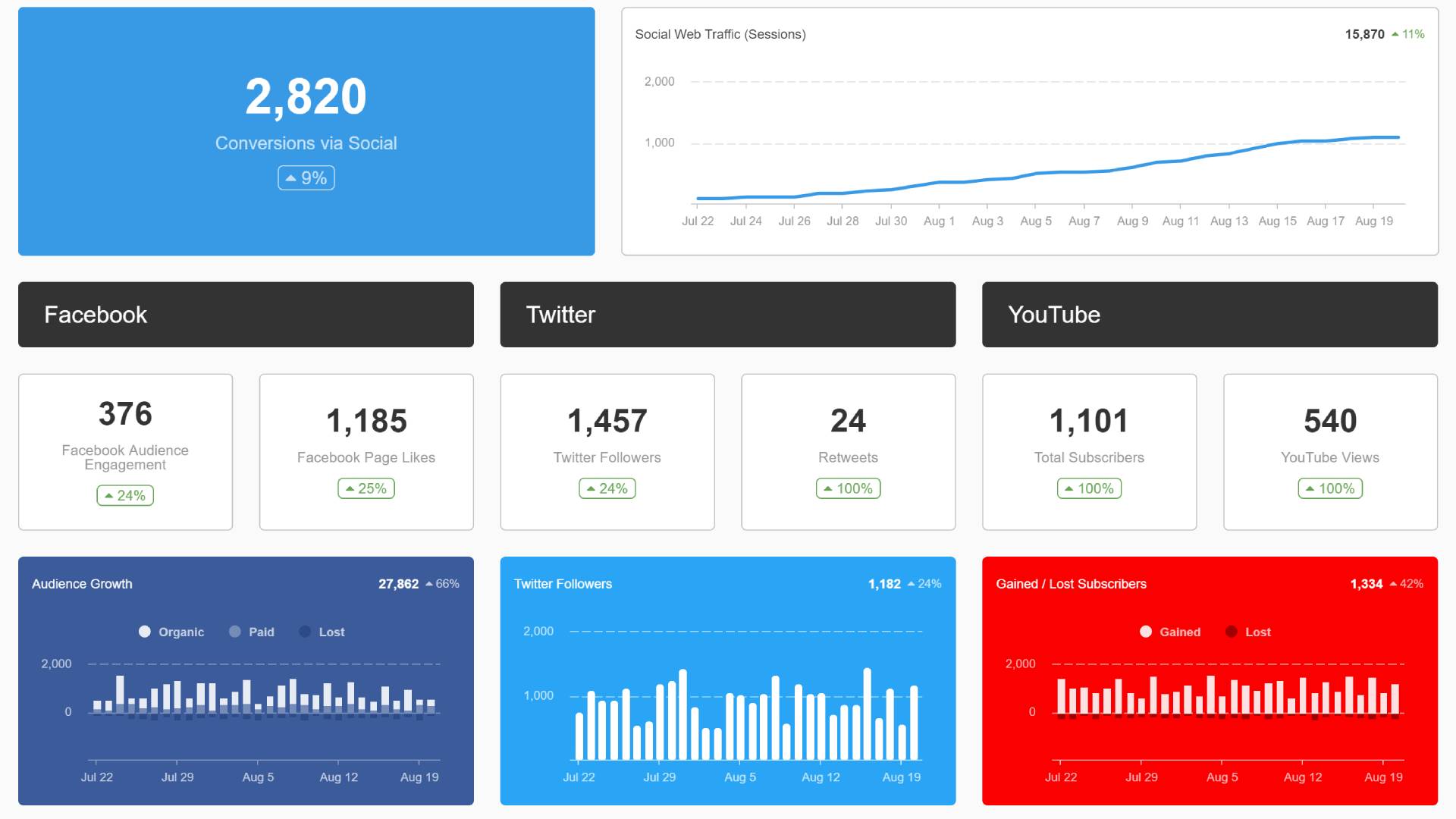

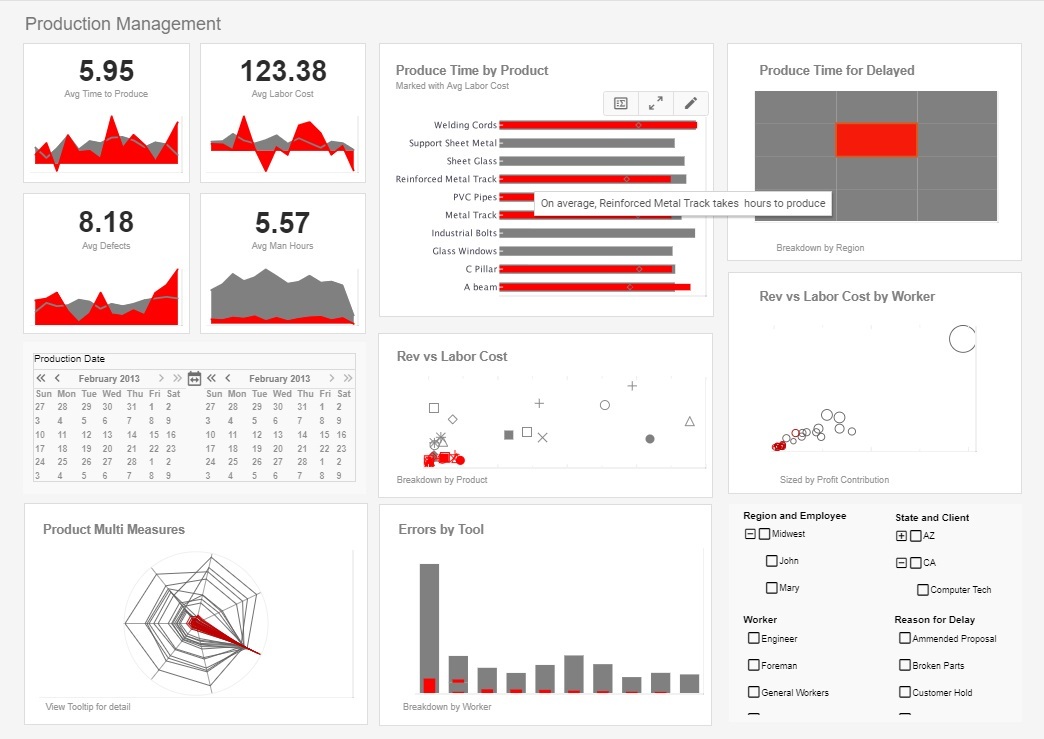

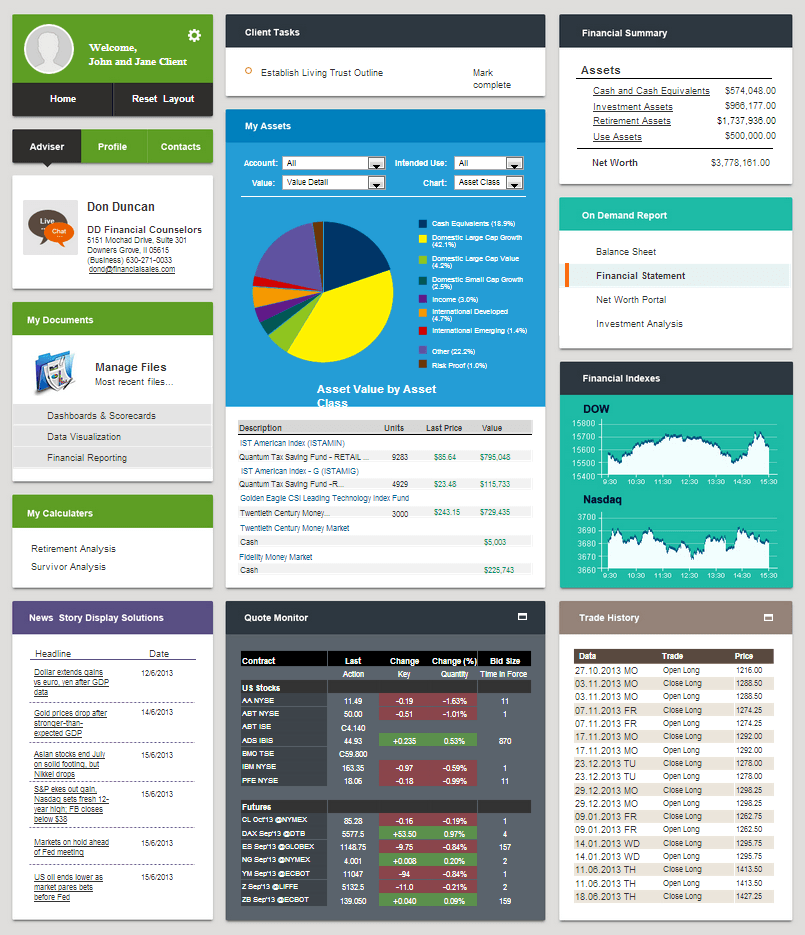

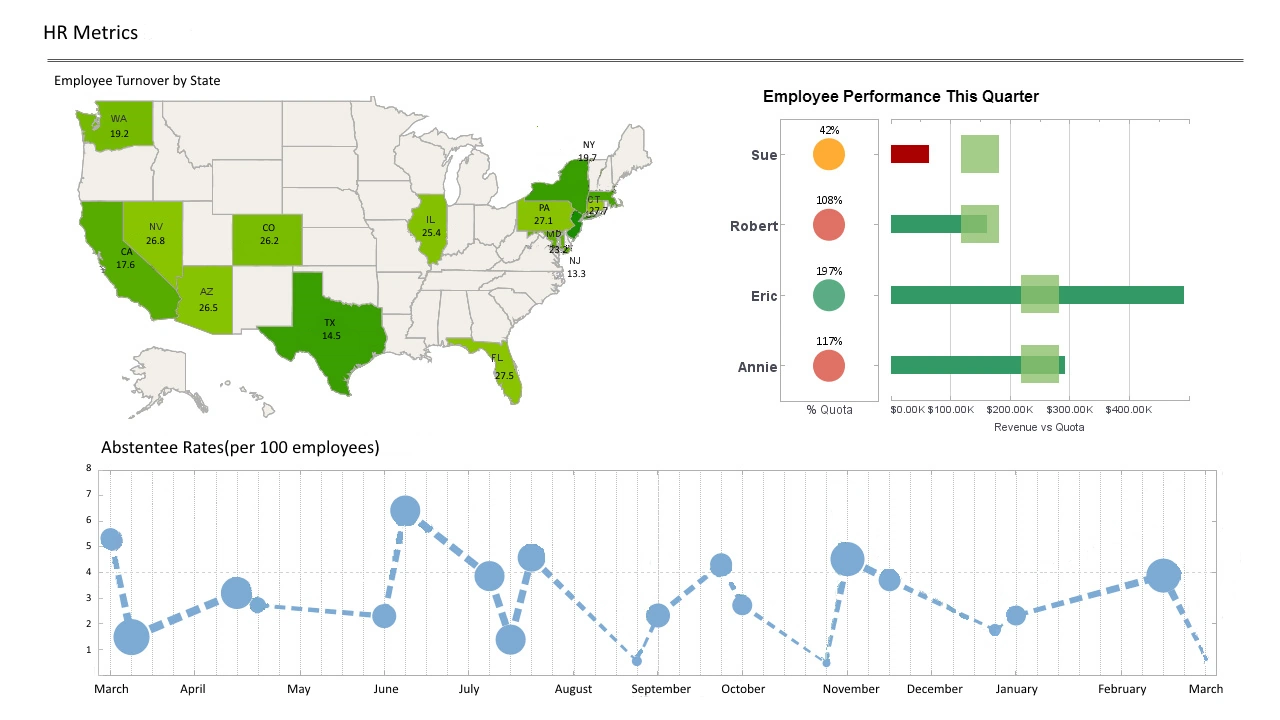

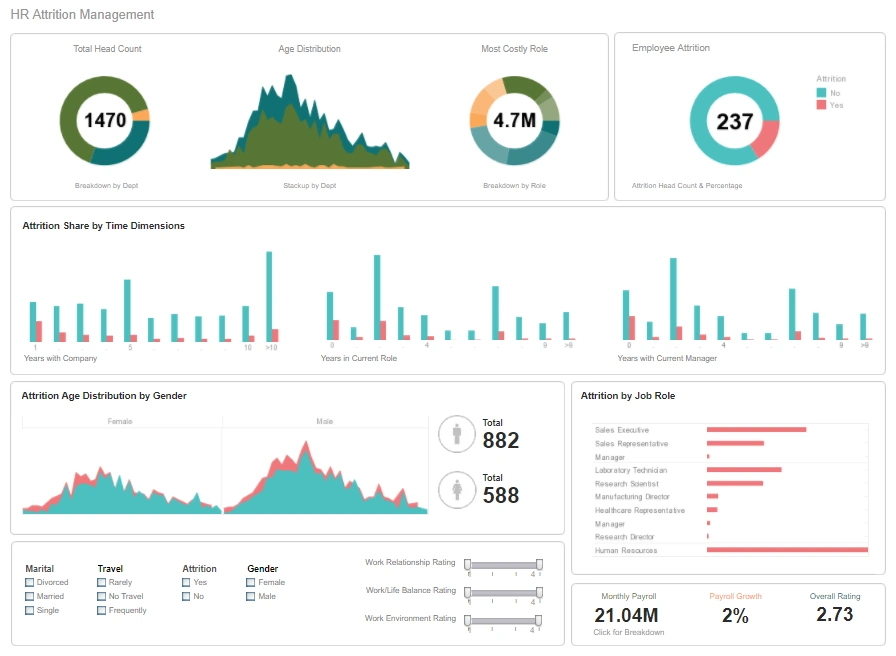

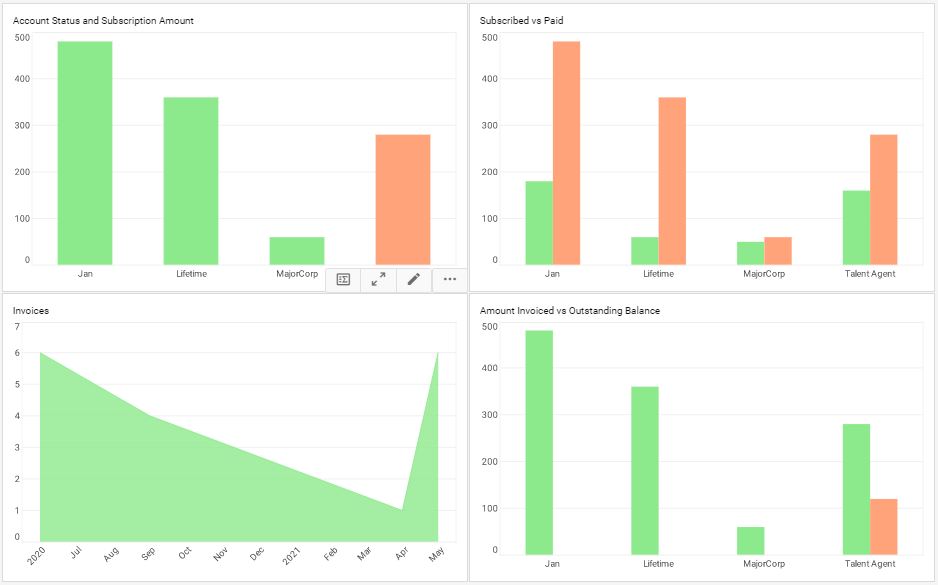

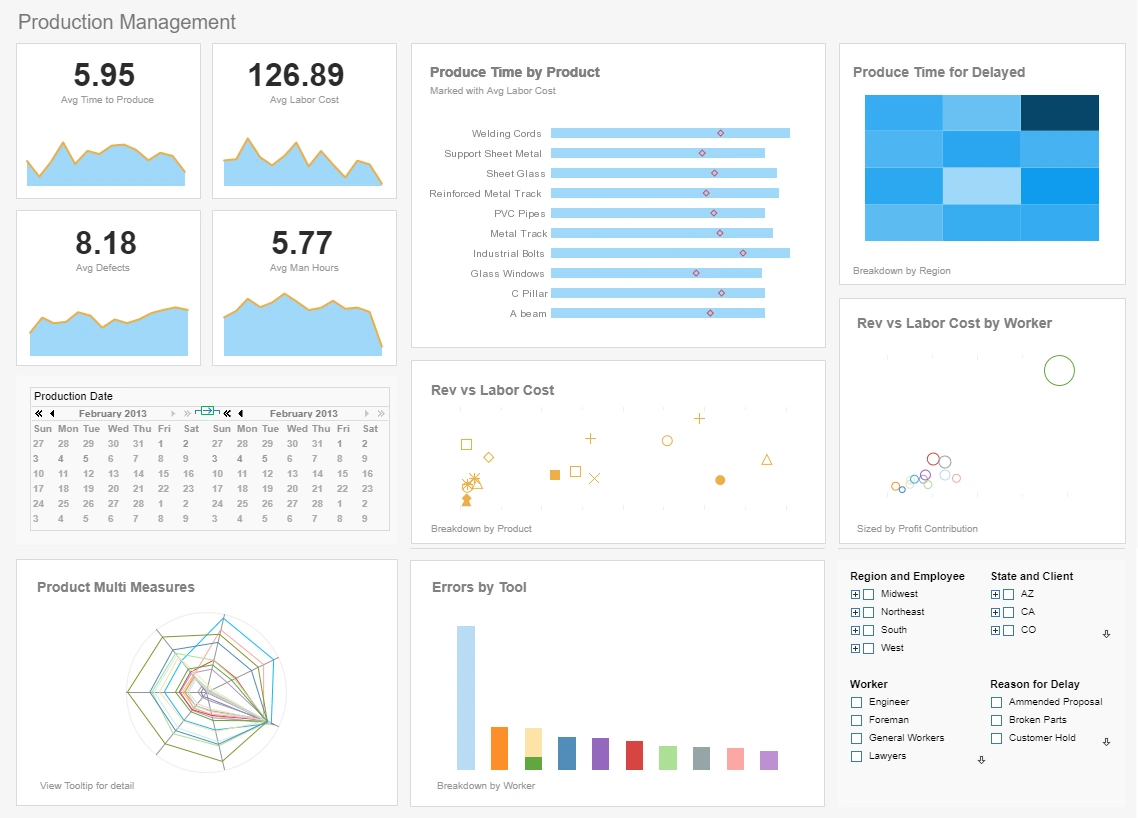

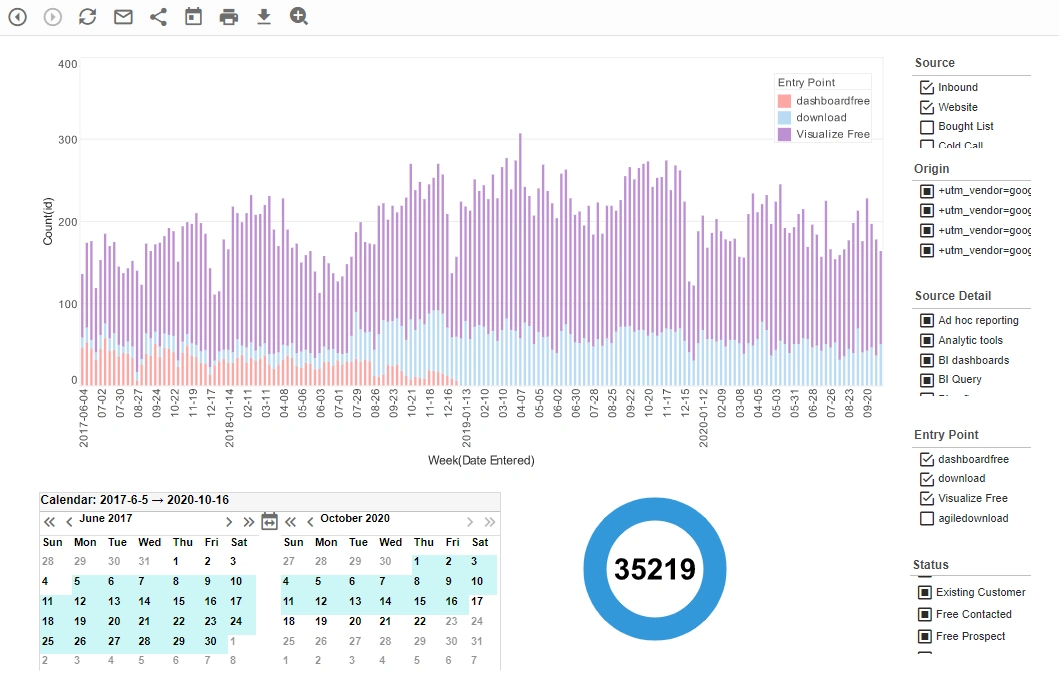

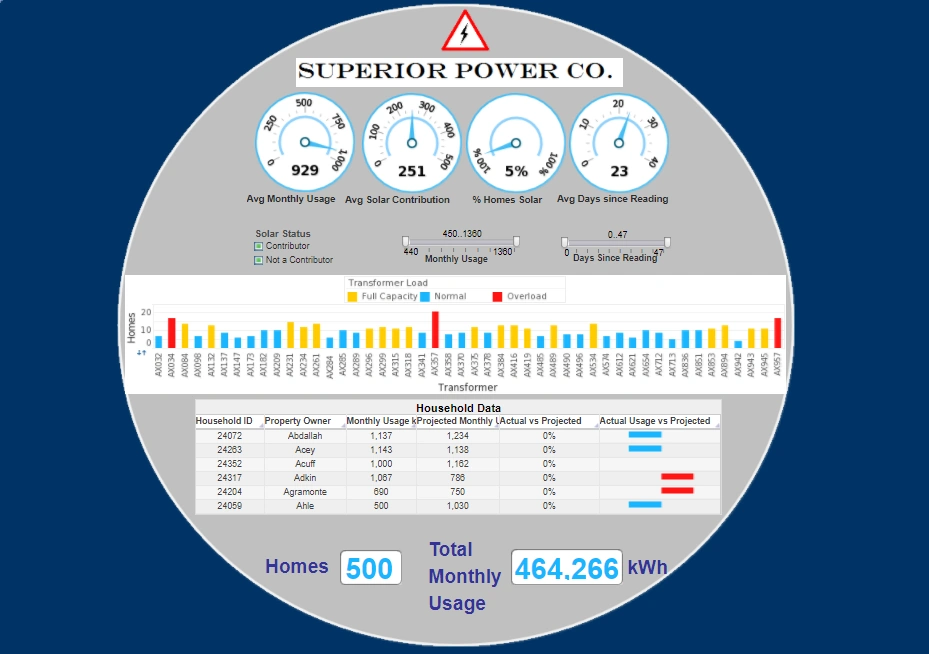

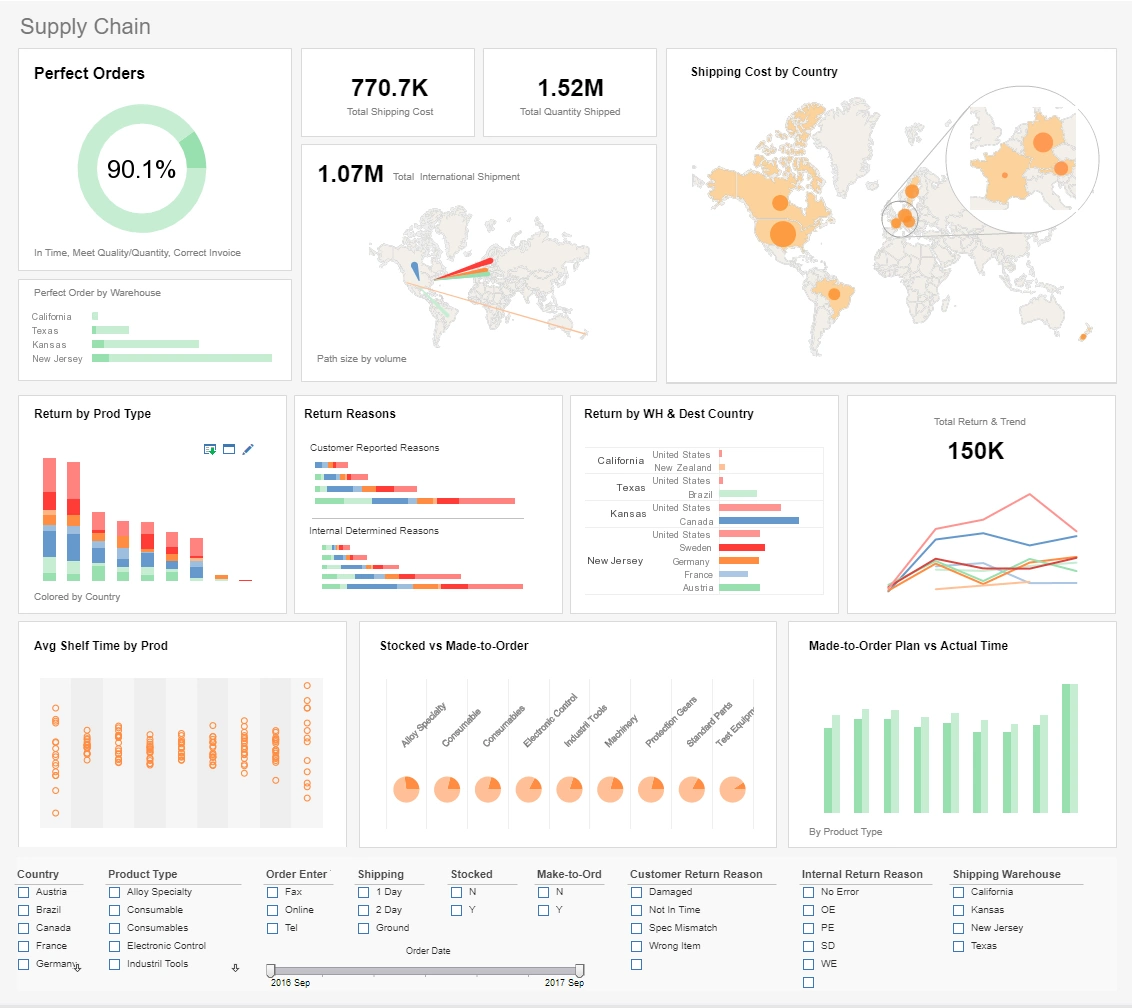

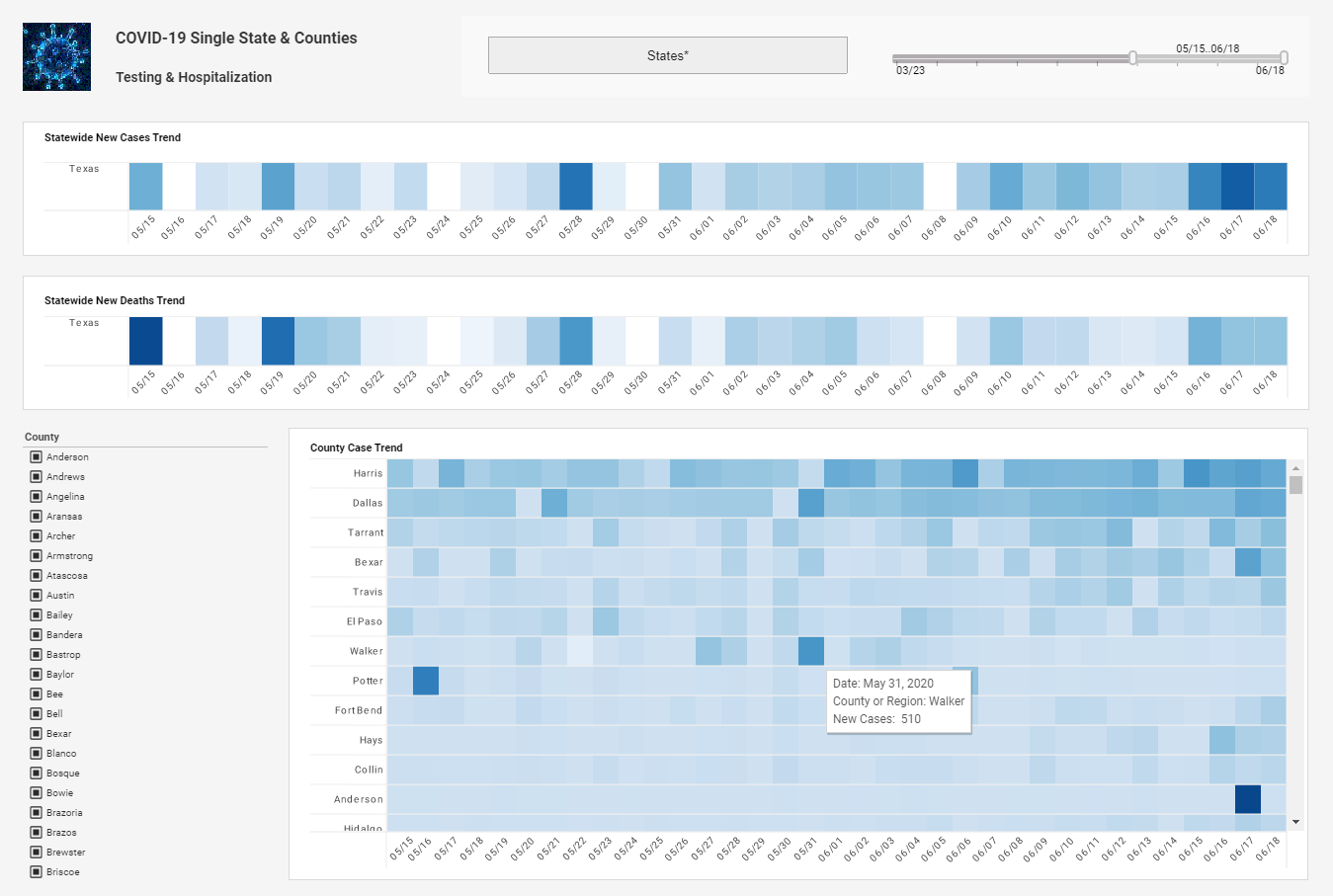

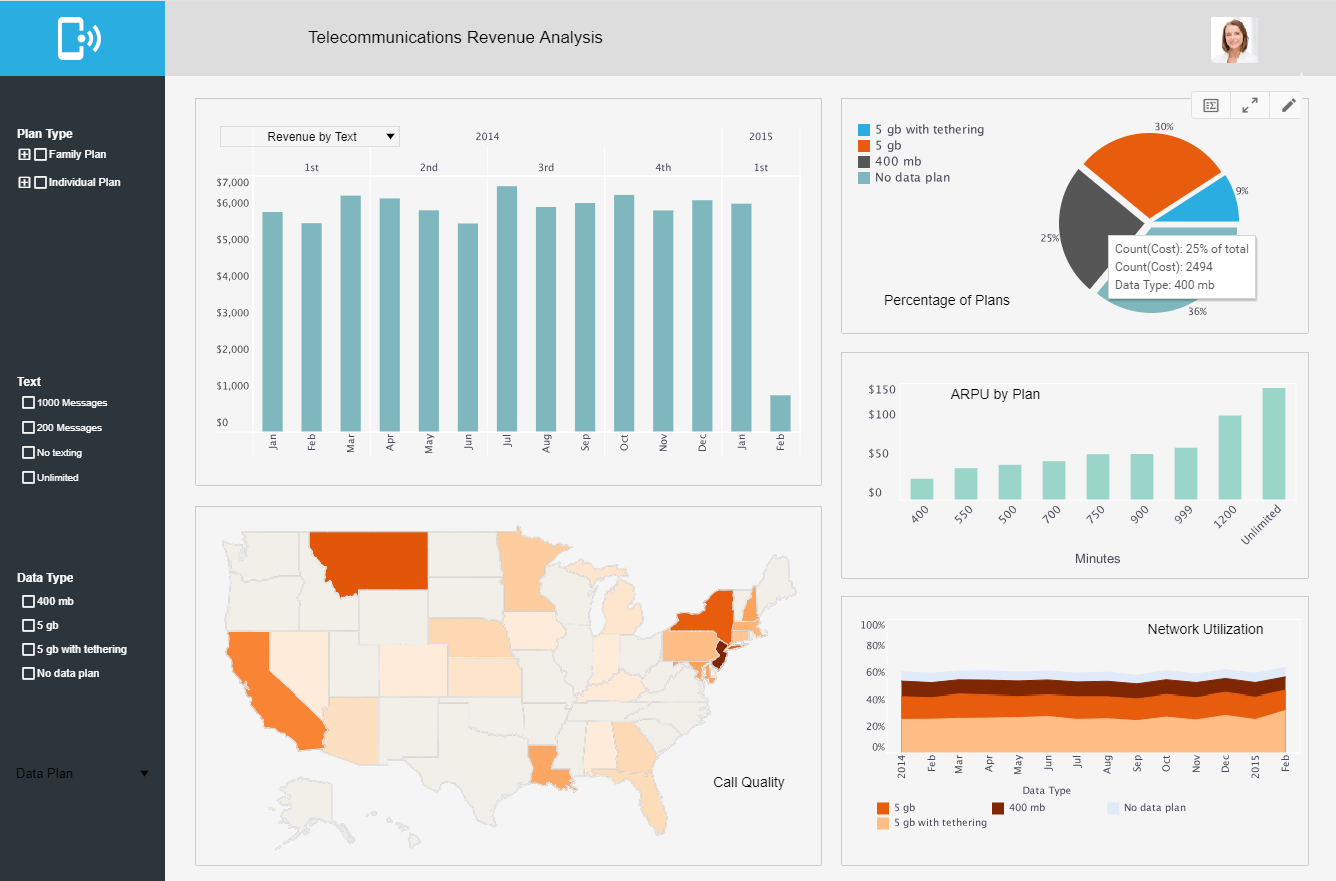

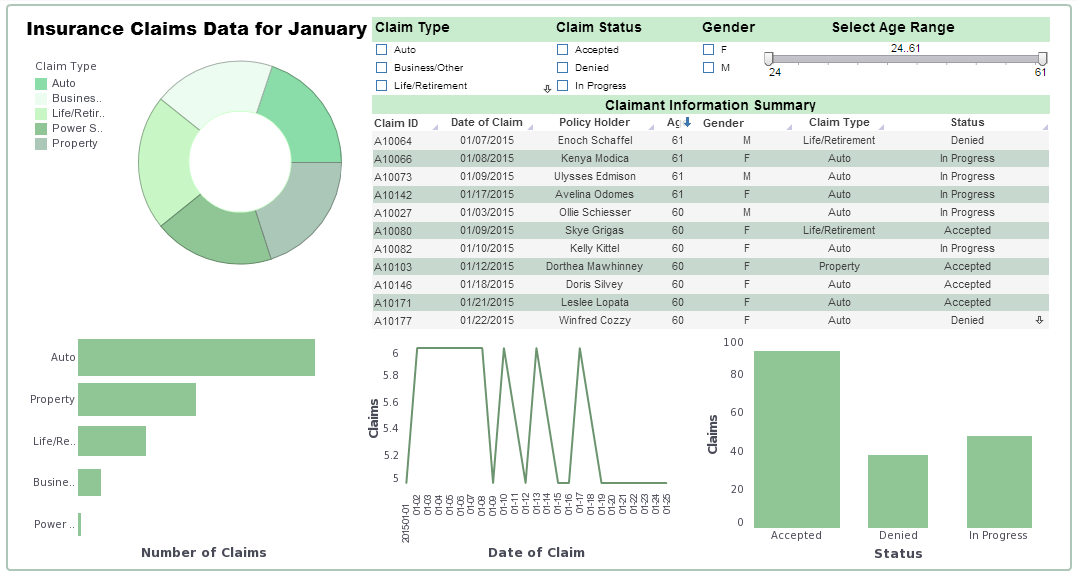

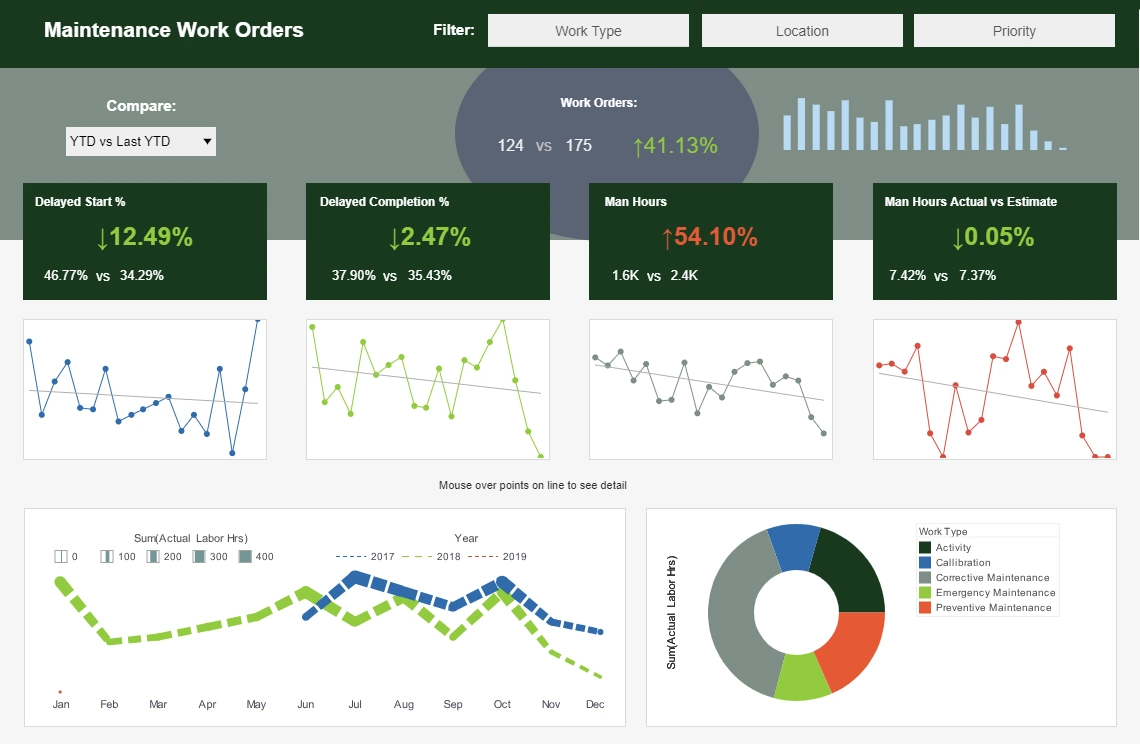

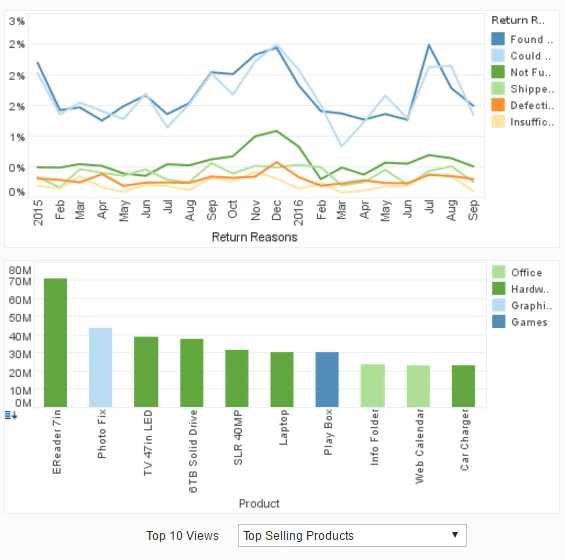

These are just a few examples of how relevant information can be gained from data analysis performed in all areas of your organization. With the aid of effective dashboard software, you can present these data insights in a professional and interactive way.

Types of Analytics

Before going into the different ways of analyzing data, it is important to understand the fundamental types of analytics. The most common types are descriptive and prescriptive, but there are other categories as well.

As more varieties of data analysis are understood, more value and actionable insights are brought to an organization.

Descriptive Analytics

Describing the past

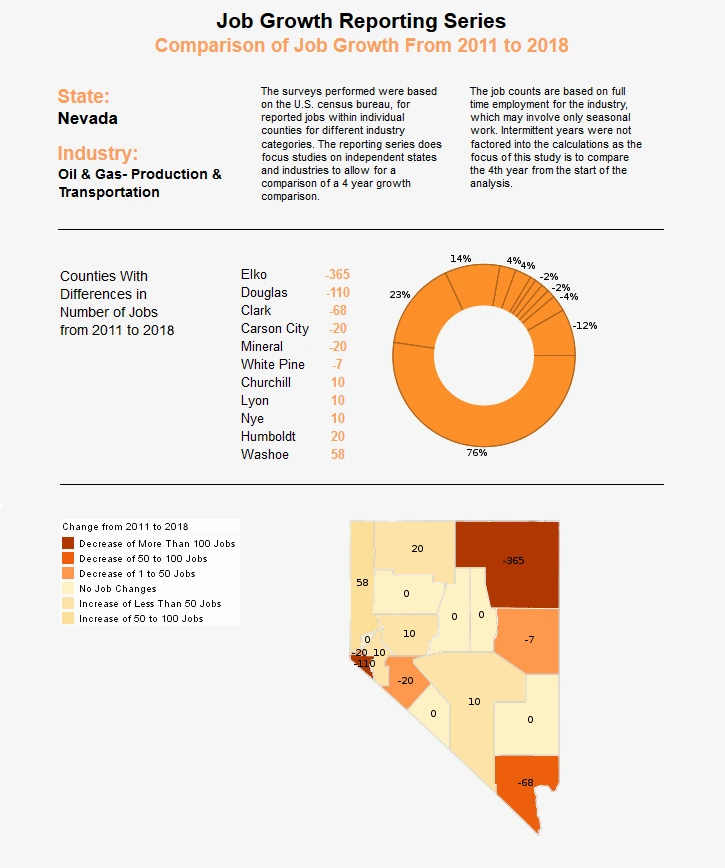

Descriptive analytics is where any analysis of data begins, with the use of data to describe what has already happened. This is done by ordering, manipulating, and interpreting data, possibly from a plethora of different sources, to glean a valuable understanding of what has been going on in an organization.

Descriptive analytics are essential for the meaningful presentation of data. And although descriptive analytics do not predict future outcomes or explore causes, they lay the groundwork for these more advanced types of analysis.
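A minimal sketch of descriptive analytics, assuming a made-up set of monthly revenue figures, might look like this. The numbers and the `describe` helper are hypothetical; the point is only that the output summarizes what already happened, without predicting or explaining anything.

```python
import statistics

# Hypothetical monthly revenue figures
MONTHLY_REVENUE = {
    "Jan": 12000, "Feb": 13500, "Mar": 12800,
    "Apr": 15100, "May": 14900, "Jun": 16200,
}


def describe(values):
    """Summarize a list of numbers with basic descriptive statistics."""
    return {
        "count": len(values),
        "total": sum(values),
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        "min": min(values),
        "max": max(values),
    }


summary = describe(list(MONTHLY_REVENUE.values()))

# The month with the highest revenue in the period
best_month = max(MONTHLY_REVENUE, key=MONTHLY_REVENUE.get)
```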

Exploratory Analytics

Exploring data relationships

Exploratory analytics explores the relationships between variables in your data. It uncovers connections, helping you generate hypotheses and begin solving problems. Data mining is a common application of exploratory analytics.
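One simple way to look for relationships between variables is a correlation coefficient. The sketch below computes the Pearson correlation by hand on hypothetical weekly figures; the data and variable names are invented for illustration, and a correlation is only a starting point for a hypothesis, not proof of cause.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical weekly figures
AD_SPEND = [1.0, 2.0, 3.0, 4.0, 5.0]      # in thousands
SITE_VISITS = [110, 135, 160, 170, 205]
SUPPORT_TICKETS = [30, 28, 31, 29, 30]

# Values near +1 or -1 suggest a strong linear relationship; values near 0 suggest none.
r_visits = pearson(AD_SPEND, SITE_VISITS)
r_tickets = pearson(AD_SPEND, SUPPORT_TICKETS)
```

With this sample data, ad spend moves closely with site visits and barely at all with support tickets, which would suggest the first pairing is the one worth investigating further.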

Diagnostic Analytics

Exploring causes

Diagnostic analysis is one of the most powerful kinds of analytics. This kind of analysis goes beyond simple description to provide understanding as to why and how things happened the way that they did. This kind of understanding is often the primary goal of an organization's analytics strategy, and its value cannot be overstated.

Diagnostic analytics provides actionable insights in response to specific business questions. It is the foremost style of analytics used in scientific research, and is also a vital aspect of analytics in retail.
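A common first step in answering a "why" question is to break an overall change down by segment. The sketch below does this for a hypothetical drop in quarterly revenue; the channels, figures, and the `explain_change` helper are assumptions made purely for illustration.

```python
# Hypothetical revenue by sales channel for two periods
LAST_QUARTER = {"online": 50000, "retail": 42000, "wholesale": 30000}
THIS_QUARTER = {"online": 53000, "retail": 31000, "wholesale": 30500}


def explain_change(before, after):
    """Return (segment, change) pairs, largest absolute change first."""
    segments = sorted(set(before) | set(after))
    deltas = {s: after.get(s, 0) - before.get(s, 0) for s in segments}
    return sorted(deltas.items(), key=lambda item: abs(item[1]), reverse=True)


# Descriptive view: what happened overall
total_change = sum(THIS_QUARTER.values()) - sum(LAST_QUARTER.values())

# Diagnostic view: which segments drove the change
drivers = explain_change(LAST_QUARTER, THIS_QUARTER)
```

Here the overall decline turns out to come almost entirely from one channel, which narrows down where to look for the underlying cause.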

Predictive Analytics

Deducing future performance

Predictive analytics attempts to look into the future and predict what will happen. It does this by building on descriptive, exploratory, and diagnostic analytics, as well as artificial intelligence and machine learning algorithms. When used properly, predictive analytics can predict future trends, as well as give advance warning of problems and future inefficiencies, helping to prevent losses before they happen.

Effective predictive analytics lead to organizational changes that not only streamline operations but also keep ahead of competitors. Doing descriptive and diagnostic analytics properly is essential for predictive analytics, as understanding of what has happened and why is necessary to properly predict how business conditions will unfold.
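As a very small example of prediction built on past data, the sketch below fits a straight-line trend to hypothetical monthly sales using ordinary least squares and extends it one month ahead. The figures and the `fit_line` helper are assumptions; real predictive models are usually far richer than a single trend line.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = (
        sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
        / sum((x - mean_x) ** 2 for x in xs)
    )
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical history: units sold in months 1 through 6
MONTHS = [1, 2, 3, 4, 5, 6]
UNITS_SOLD = [100, 108, 121, 130, 138, 151]

slope, intercept = fit_line(MONTHS, UNITS_SOLD)

# Extend the fitted trend to the next, not yet observed, month
forecast_month_7 = slope * 7 + intercept
```

The forecast is only as good as the assumption that the past trend continues, which is why a sound understanding of what happened and why comes first.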

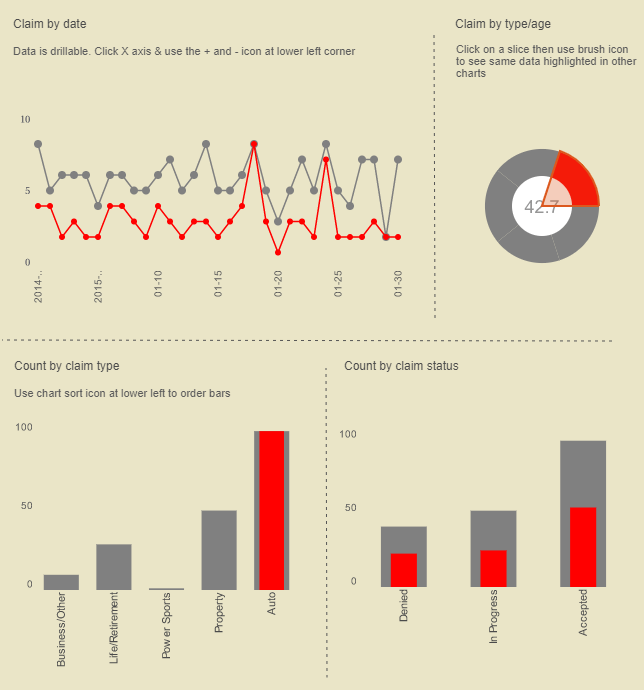

One example of predictive analytics at work is in detecting fraudulent insurance claims, which are disturbingly common. Fortunately, predictive analytics can estimate a customer's propensity for a particular type of behavior during the underwriting process. Ultimately, of course, the customer's behavior may depend on the context of the situation. Their financial need might change between the point of buying a policy and submitting the claim, for example.

Market conditions might change, and the insurer's performance at the point of claim might influence how the customer penalizes the insurance company. For example, if an insurance company performs particularly badly at the point of claim, then the customer may well penalize them in some way.

Very interesting metrics are emerging. It is important also that we take into account the policy position. For example, if a customer was declined coverage on the basis of something they may or may not do, then they have an obligation to declare that insurance had been declined to all future insurers.

Fraud analytics helps predict policyholder fraud, but there are also quite big issues around supplier fraud, which is generally referred to as claims leakage. Predictive analytics has a major part to play in how companies manage and optimize their supply chain, ensuring insurers pay no more than the absolute requirement.

Predictive analytics can generate these outputs from either historical data or the live data being given to the call center agent. The technology feeds the outputs back to the agent in real time, to make a difference at that inflection point, using APIs integrated with their system so that the scores are relayed to the contact management system as they are produced. These integrations are generally relatively easy to develop; it just takes the right people and the proper building blocks to get from the model output at one end to the presentation of that output on the agent's screen at the other.
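The scoring step described above can be sketched roughly as follows. Everything here is an assumption made for illustration: the feature names, the weights, the logistic form of the score, the threshold, and the shape of the response are hypothetical, and a real deployment would use a model trained on historical claims and whatever API the contact management system actually exposes.

```python
import math

# Hypothetical weights; a real model would learn these from historical claims
WEIGHTS = {
    "claims_last_3_years": 0.8,
    "policy_under_30_days_old": 1.5,
    "previously_declined_coverage": 1.1,
}
BIAS = -3.0


def fraud_score(claim):
    """Map claim features to a score between 0 and 1 using a logistic function."""
    z = BIAS + sum(w * float(claim.get(name, 0)) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


def score_for_agent(claim, review_threshold=0.5):
    """Build the payload that would be relayed back to the agent's screen."""
    score = fraud_score(claim)
    action = "refer_for_review" if score >= review_threshold else "proceed"
    return {"claim_id": claim["claim_id"], "score": round(score, 3), "action": action}


# Hypothetical claims as they might arrive while the agent is on the call
routine = score_for_agent({"claim_id": "A-1"})
unusual = score_for_agent({
    "claim_id": "A-2",
    "claims_last_3_years": 2,
    "policy_under_30_days_old": 1,
    "previously_declined_coverage": 1,
})
```

Keeping the scoring function separate from the function that builds the agent-facing response mirrors the two ends described above: the model output on one side and its presentation on the other.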

To learn more about how predictive analytics can be used in the insurance industry, see this article.