Self-service Reporting Environments

This is the continuation of the transcript of the DM Radio show "Avoiding Bottlenecks and Hurdles in Data Delivery". InetSoft's Principal Technologist, Alicia Ivancovich, joined industry analysts and other data management software vendors for a discussion about current issues and solutions for information management.

David Inbar: Absolutely, and so the underlying feeds here, behind that, are expanding the range and volume and granularity of data that you are looking at with your compute horsepower, and you are also thinking about what I will call data timeliness, or maybe data shelf life.

That’s the time between the instant when data theoretically becomes available and the point when you can actually get it to where it could be actionable or insightful for your users.

That becomes a significant compute problem in today’s environment, and certainly one that’s not only a technical challenge but also an economic challenge. So we will bring in the money factor here, I am sure, at some point in these discussions, and stop to look at what it costs you to, as you say, manage the life cycle of data that is useful to you. But granularity of detail is certainly a big part of it.

Data Governance and Management

Jim Ericson: You know, David, this is Jim, and that’s an interesting point, because it might be that we are hitting kind of a blowback point. I remember years ago folks would talk about some of the domains, and folks would talk about data warehouses as places where data goes to die, you know. And I am seeing an influx; we discussed this a little bit on this show a couple of weeks ago. We were talking about disposing of data and rediscovery, things like that.

We have a cover story coming up in Information Management next month. It’s really about the risk and the cost of data, and you know, I was trying to come up with a clever subtitle for this, and there were things like the invisible business trash, or virtual trash, where we really have become consumers, and there is so much data, and at the same time now we are starting to stream so much data.

Geez, what are we actually using and keeping? And boy, you know, it really does kind of raise that governance figure again of how we are rationalizing what we are doing, because we seem to grab everything in the world out there. And boy, this is another reflection of the fact that we are not managing it, and that we are exposing ourselves to the risk of discovery or the cost of carrying data we have never even used.

Balancing Data Volume and Cost

David Inbar: I think that’s a very, very valid concern, and I think that’s going to get more and more attention. As you are talking about that, I am also thinking of a classic use case, or many use cases that we see, where a lot of companies are still somewhat behind what we are talking about. There was an interesting case recently of a telecom service provider, and you would think that they had a lot of this down, but they have call detail records flowing through their phone switches all day long.

And interestingly enough, those get churned off the switches every five minutes or so, but it takes them a matter of hours to actually flow the relevant information, and all the details that they would like to have, into their decision support system. So they are constantly looking in the rearview mirror and reacting to situations after they have happened. And so what they need, going back to some of the opening statements about parallelism, is to harness a little bit of the parallelism that’s actually sitting unused within their servers today to dramatically speed up that process of getting data into some form that’s usable.
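As an editorial illustration of the idea of using idle parallelism to load call detail records faster, here is a minimal Python sketch. It is not the telecom provider's actual pipeline. The record layout (`caller,callee,start,duration_s`) and the function names `summarize_batch` and `summarize_batches` are assumptions made up for this example. It uses a thread pool from the standard library; for CPU-heavy parsing, `ProcessPoolExecutor` offers the same `map` interface and spreads work across cores.

```python
from concurrent.futures import ThreadPoolExecutor


def summarize_batch(lines):
    """Summarize one batch of CDR lines.

    Assumed (hypothetical) line format: caller,callee,start,duration_s
    """
    calls = 0
    total_seconds = 0
    for line in lines:
        _caller, _callee, _start, duration = line.strip().split(",")
        calls += 1
        total_seconds += int(duration)
    return {"calls": calls, "total_seconds": total_seconds}


def summarize_batches(batches, max_workers=4):
    """Process several five-minute batches concurrently and merge the results."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Each batch is summarized independently, so batches can run side by side.
        partials = list(pool.map(summarize_batch, batches))
    return {
        "calls": sum(p["calls"] for p in partials),
        "total_seconds": sum(p["total_seconds"] for p in partials),
    }
```

Because each batch is summarized on its own and the partial results are simply added together, the same approach would extend to more workers or more machines without changing the merge step.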

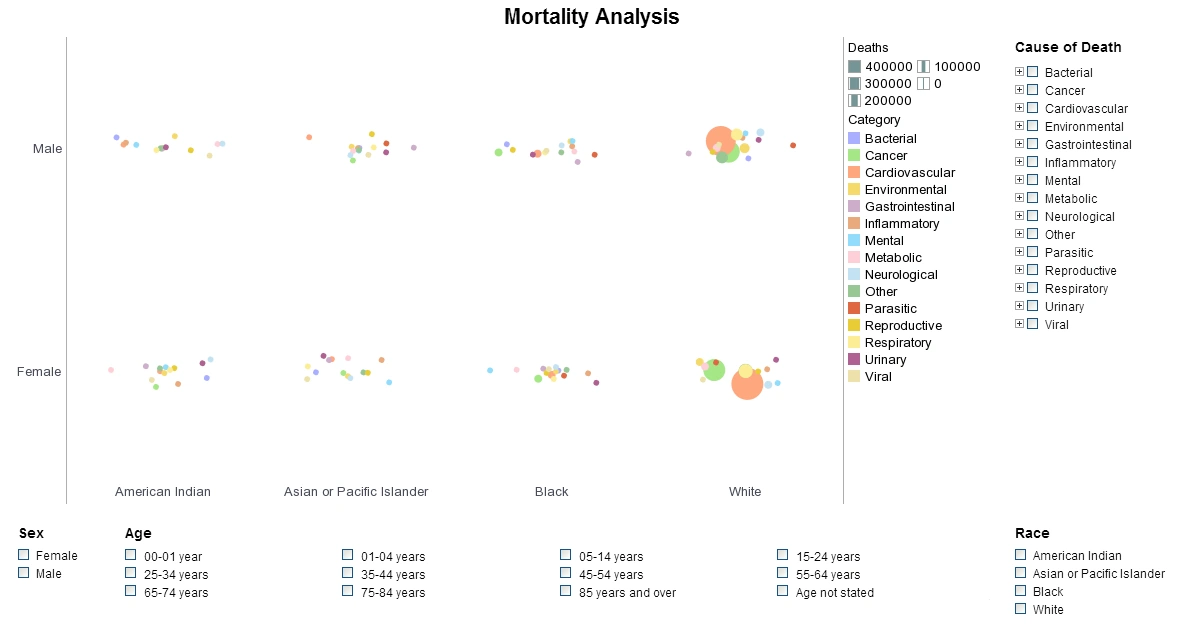

And then into that self-service reporting environment you are talking about, where the operations folks can actually see things happening more or less in real time, and potentially drill down and then see bigger amounts of data alongside, and say, well, I see this spike over here, but what was happening at exactly the same time a week ago or a year ago, and did we have a spike then? And they can answer the question: is this normal or is this abnormal?
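The comparison described above, checking a spike against the same time a week ago or a year ago, could be sketched roughly as follows. This is an editorial illustration only. The names `baseline_times` and `is_abnormal`, the 50 percent tolerance, and the choice of 52 weeks as "a year ago" (so that the weekday matches) are all assumptions, not something stated in the discussion.

```python
from datetime import datetime, timedelta


def baseline_times(ts):
    """Return the comparison points: same time one week ago and 52 weeks ago."""
    return [ts - timedelta(weeks=1), ts - timedelta(weeks=52)]


def is_abnormal(counts, ts, tolerance=0.5):
    """Decide whether the value at ts stands out against its historical baselines.

    counts maps a datetime bucket to a call volume (hypothetical structure).
    Returns None when no baseline is available, True when the current value
    exceeds every available baseline by more than the tolerance fraction,
    and False otherwise.
    """
    current = counts[ts]
    history = [counts[t] for t in baseline_times(ts) if t in counts]
    if not history:
        return None
    return all(current > h * (1 + tolerance) for h in history)
```

With this rule, a spike that also showed up at the same time a week ago is treated as normal, which matches the question of whether the pattern has been seen before.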